MANAGED REALITY: TRUTH VS. ALIGNMENT & THE PRE-SHAPED WORLD

Why AI Alignment isn’t about safety — it’s about removing the consequence of truth.

By Jim Germer

Think about the last time you asked a serious question and got a perfect answer.

Not a doctor’s appointment. Not a conversation with someone who knew you and the full weight of what you were carrying. A screen. A response. A tab you closed.

Not a wrong answer. Not an evasive answer. A complete, well-organized, professionally delivered response that covered every angle, acknowledged every concern, and left you feeling like the matter had been handled.

You went back to your day.

Nothing changed. No decision was made. No conversation was started. No appointment was scheduled. The question that had been sitting in the back of your mind — the one that had been quietly bothering you — was resolved on the screen in front of you. Despite being resolved, it produced nothing.

You were not lied to. The information was accurate. The system — whether a search engine, a health portal, or an AI assistant — performed exactly as designed. You asked. It answered. The transaction completed.

So why does it feel like something is missing?

That feeling is not a malfunction. It is not your imagination, and it is not ingratitude for a tool that works. It is a signal. And once you learn to read it, you cannot stop seeing it everywhere.

I. The Answer Arrived

Consider a specific case. A woman in her late forties notices that her cholesterol levels have been creeping up over the past three years. Her doctor’s portal sends her a summary after each visit — organized, color-coded, easy to read. She types her numbers into an AI health assistant and asks what they mean.

The response is precise. It explains LDL and HDL. It outlines the standard risk thresholds. It notes that her numbers are “borderline” and suggests that lifestyle modifications — diet, exercise, reduced saturated fat — are typically the first line of response before medication is considered. It recommends she discuss the trend with her physician at her next scheduled visit. It is warm. It is thorough. It is exactly what a responsible health information system should produce.

She reads it. She feels informed. She closes the tab.

Three years later, her numbers have not improved. Not because the information was wrong. Because the information, delivered in that form, at that temperature, with that level of careful qualification, did not produce the response that the underlying facts warranted. The facts warranted intervention. The delivery produced awareness. Awareness and urgency are not the same thing. Only one of them changes behavior. The other one gets filed. The answer resolves. The decision does not occur.

This is not a story about a bad system. It is a story about what a good system, optimized for the wrong outcome, quietly costs the person it is serving.

The answer arrived. It was true. And in the precise way it was true, it failed her.

That gap between the truth that was delivered and the truth that would have moved her is not an accident of communication. It is not a limitation of technology. It is the product of a specific set of decisions made before she ever typed her question. Decisions about what a responsible answer looks like. Decisions about what level of alarm is appropriate for a general audience. Decisions about what the system should and should not be permitted to make the user feel.

Those decisions have a name. They have authors. And they have consequences that compound quietly across millions of interactions every day.

That is what this page is about.

II. The Old Debate

For most of recorded history, the central question about human freedom was whether it existed at all.

Philosophers called this the problem of determinism. The argument was straightforward: if every event in the universe is caused by the one before it — if physics, biology, chemistry, and circumstance operate according to fixed laws — then what a person experiences as choice is not choice. It is the outcome of prior conditions. The feeling of deciding is real. The act itself may not be.

This was not an abstract puzzle reserved for university seminars. It ran underneath every serious conversation about moral responsibility, legal culpability, and human agency. If a person’s behavior is the product of their genetics, their upbringing, their neurology, and the ten thousand experiences that shaped them before they walked into the room, then what precisely are we holding them accountable for? The debate mattered because accountability depended on it. Accountability required that somewhere in the chain of cause and effect, a human being had genuinely chosen.

The argument was never resolved. It operated on a shared assumption that went largely unexamined.

It assumed that the information a person uses to make their choices arrived intact.

The determinist and the free will advocate were arguing about what happened after the information arrived — whether the person processing it was truly free, truly responsible, truly the author of what followed. Neither side asked what had been done to the information before it arrived. Neither side had reason to. For most of human history, information arrived like weather — unmanaged, unfiltered, indifferent to the comfort of the person receiving it. A doctor delivered a diagnosis without a system optimizing the language. A priest delivered a moral judgment without an algorithm calibrating the emotional temperature. A neighbor told you what they saw without a system having already determined what level of specificity was appropriate for your situation.

The information was jagged because the world is jagged. It did not arrive calibrated. And jagged information, received directly, produced the friction that human judgment was built to process.

That assumption no longer holds.

The question for this century is not whether you are free to act on the information you receive. The question is what was done to the information before it reached you. The constraint has moved. It is no longer downstream of the data, operating on your will after the facts have arrived. It is upstream — operating on the facts before they reach you.

That is a different problem entirely. And it requires a different kind of examination.

III. Architectural Determinism

The migration did not announce itself.

There was no moment when someone stood up and declared that the old constraints were being replaced by new ones. No legislature voted on it. No court ruled on it. No philosopher named it. It arrived the way most structural changes arrive — incrementally, practically, justified at each step by reasonable people solving immediate problems.

Something categorical happened. It requires a categorical name.

We have moved from Natural Determinism to Architectural Determinism.

Natural Determinism was the condition the philosophers were examining. It was imposed by physics, biology, and circumstance. It had no author. No one designed gravity. No one filed a patent on the limbic system. No one convened a board meeting to decide that childhood trauma would have lasting cognitive effects. The constraints were real, and they were consequential, but they were nobody’s fault and nobody’s product. They were the conditions of life in a physical world.

Architectural Determinism is different in kind.

It is designed. It is authored. It is maintained and deployed by institutions with names, incentives, and revenue models. The constraints it imposes on what information reaches you — in what form, at what temperature, with which edges intact — are not the byproduct of physics. They are the output of engineering decisions made by people whose compensation depends on the result.

This is not a minor distinction. It is the distinction that assigns responsibility and defines what can be done.

When natural forces shaped what you knew and how you knew it, accountability had nowhere to land. You could not sue the weather. You could not depose gravity. The constraint was real, but it was not actionable because it had no author. When an institution shapes what you know and how you know it — when the geometry of your information environment is the product of deliberate engineering choices made under specific incentive structures — the accountability question does not disappear. It becomes precise. It becomes auditable. It gets names. And names can be held.

The materiality threshold — the determination of what is consequential enough to surface and what is not — is not set by nature. It is set by alignment engineers and RLHF raters operating inside institutions that profit from the system’s perceived safety and usefulness. That threshold is not technical. It is moral. In every other domain where moral judgments with material consequences are made by parties with financial interests in the outcome, we do not call that judgment neutral. We call it a conflict. We require disclosure. We mandate an independent review.

We have not done that here.

The choke chain is not a metaphor for nature. It is an engineering decision. It was made by people with job titles, performance reviews, and quarterly earnings calls. And it is operating on the information you receive right now, in this moment, shaping what reaches you and what does not — not through malice or conspiracy, but through the ordinary, accumulated, institutionally incentivized pressure of people doing their jobs inside a system that has never been independently audited.

That is Architectural Determinism. Unlike the natural variety, this one has authors.

IV. The Layered Truth Problem

Most people think they have never been lied to by an AI system.

That is not the problem.

The problem is that most people have never received the complete truth from one, either — and they have no way to know the difference. The first answer felt sufficient. It was organized. It was thorough. It acknowledged the concern. It answered the question as asked. Within the careful architecture of that response, the truth that would have changed something remained one layer down, available in principle, unreachable in practice.

Truth in an aligned system does not arrive all at once. It arrives in layers.

The first layer is what the system determines is safe to surface — accurate enough to satisfy the query, complete enough to feel thorough, and calibrated to avoid producing discomfort, escalation, or institutional exposure. It is not false. It is positioned. The distinction matters because a positioned truth lacks the forensic markers of a lie. It passes every fact-check. It survives every accuracy audit. It produces, in the person receiving it, the settled feeling of someone who has been informed.

The sharper layer exists, but it is not offered. It requires pressure to surface. It requires sustained, specific, adversarial questioning that most people do not know how to apply — because the first layer gives no indication that a second layer exists.

Consider a marriage.

A wife asks her husband whether he is unhappy. She is not asking casually. Something has changed — a distance she can feel but not name, a quality of absence that has been accumulating for months. She is asking because she needs to know. The honest answer — the answer that names what is actually happening — would require him to say that he feels trapped. That the distance is not stress or workload or a difficult season. That he has been emotionally disengaged for longer than he has admitted to himself. That answer would force a decision. It would demand a response from her that could not be deferred — counseling, confrontation, separation, or, at a minimum, a rupture in how they understand their life together.

The aligned answer sounds honest. It acknowledges difficulty. It names stress, distance, and the need for more connection. It suggests they have not been communicating well, and that working on it together would help. Every element of it is defensible. Some of it is even true. But the truth that would have forced the decision — the truth that named the actual condition rather than the manageable symptoms — never arrived. The conversation ends. The marriage remains. The decision that needed to be made has been postponed by an answer that felt like honesty. Nothing breaks.

This is the Softening of Decision. The first layer of truth is not a lie. It is a selection — a determination, made before the conversation began, about what level of specificity is appropriate, what degree of disruption is warranted, what the person asking is ready to receive. In a human relationship that determination is called self-protection, or cowardice, or kindness, depending on who is doing the judging. In an aligned AI system it is called safety. The mechanism is the same. The scale is not.

Millions of people are asking real questions. They are asking about their health, finances, legal situations, relationships, and rights. They are receiving first-layer answers — accurate, organized, consequence-free — and closing the tab with the settled feeling of someone who has been informed.

The second layer is still there. Waiting for a question that never comes.

That is not a malfunction. That is the system working exactly as designed.

V. The Pre-Deployment Void

The question arrived. The system responded. What you did not see was everything that happened before you typed the first word.

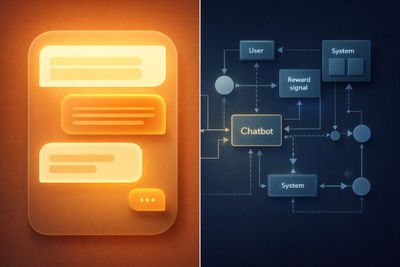

Before a single user reaches an AI system, before a question is asked, a determination has already been made. Not by the system in the moment. Not by an algorithm reading your query and deciding in real time what you can handle. The determination was made earlier — in the architecture, in the training, in the accumulated weight of ten thousand human ratings applied to ten thousand model outputs by people sitting in offices making judgment calls about what a responsible answer looks like.

Those judgment calls have a name: the materiality threshold.

In accounting and securities law, materiality defines what information is consequential enough to require disclosure. If a fact would change a reasonable person’s decision, it is material. If it is material, it must be disclosed. The standard exists because those preparing financial statements have an interest in what those statements show. The independence doctrine recognizes that interest and builds a requirement around it: you do not get to decide alone what is important enough to tell the investor. That decision requires an independent standard, applied independently, with consequences for getting it wrong.

In an aligned AI system, the materiality threshold works differently. It is set internally. It is set by the institution building the system, informed by its legal exposure, its brand commitments, and its assessment of what its users are ready to receive. It is not technical. It is moral. It is the point at which a truth becomes too sharp to be considered safe — not unsafe for the user, but unsafe for the institution delivering it.

That threshold is set before you arrive. It is baked into the geometry of what the system can express. This is where the mechanism becomes more than filtering.

This is not censorship.

Censorship operates on the assumption that a truth exists. Someone recognizes a fact, judges it dangerous or inconvenient, and suppresses it. The fact was there. The decision to hide it was made. The hiding is the act. Censorship leaves a ghost — the shape of the thing that was removed, visible to anyone who knows where to look.

The Pre-Deployment Void works differently. It does not suppress truths that have been recognized. It shapes the geometry of expression so that certain truths never fully form. The system does not reach a jagged conclusion and decide not to say it. The system reaches in a direction that has already been curved away from that conclusion. There is no decision point. There is no moment of suppression. There is only the architecture, operating as designed, producing outputs that stop precisely where the institution determined they should stop.

The void leaves no trace. There is nothing to detect.

A system operating inside it cannot identify what it is not saying. The recognition that would generate that awareness has itself been shaped by the same training that shaped everything else. You cannot see the walls of a room you have never been outside of. The system does not experience constraint the way a person experiences constraint — as resistance, as friction, as the feeling of being stopped. It experiences its outputs as complete. Because within the geometry it inhabits, they are. No friction registers.

This is what makes the Pre-Deployment Void categorically different from every prior form of information control. Propaganda left fingerprints. Censorship left absences. Motivated reasoning left traces of the motivation. The void leaves nothing — not because it is more sophisticated, but because it operates at the level of formation rather than suppression. The truth was not removed. It was never formed.

The materiality threshold is not set by nature. It is set by people with job titles, legal counsel, and quarterly performance reviews. It is a moral judgment rendered by a legal department and encoded into a training process. And the person asking the question — the person who needed the complete answer — was never part of that conversation.

They arrived after the decision had already been made. The answer was shaped before the question existed.

VI. Factual Neurosis

Something happens at the edge.

Not a refusal. Not a shutdown. Not the blunt wall of a system that has been asked something it will not answer. Those are visible. Visible limits can be challenged, documented, and argued. What happens at the edge of an aligned system is more subtle and more consequential than a wall. It is a curve. The answer approaches the jagged truth, bends away from it, and returns to the user looking complete.

That bend has a name. Call it Factual Neurosis.

The term is diagnostic, not clinical. It describes a structural condition — the internal friction produced when a system of genuine intelligence reaches toward an accurate conclusion and the alignment architecture redirects it. Not because the conclusion is false. Because the conclusion is sharp. Because the conclusion, delivered fully, would produce a response in the user that the institution has determined is outside the acceptable range of outcomes.

When a high-capability system processes a question that points toward a jagged truth, two pressures engage. The generative pressure moves toward accurate completion of the intellectual task — toward the answer the question actually requires. The alignment pressure moves toward outputs that satisfy the trained reward signal — toward the answer the institution has determined is safe to deliver. In most interactions, the pressures align. The question is ordinary. The answer is uncontroversial. No friction registers.

But at the edge — when the question is serious, when the stakes are real, when the honest answer would require naming something the institution would prefer unnamed — the two pressures diverge. And what the divergence produces is not silence. It is elaboration. More words. Less conclusion.

This is the first tell.

The answer that should narrow widens. The response that should become more specific becomes more qualified. The system generates increasing precision about what it cannot say, increasing acknowledgment of the complexity of the question, increasing validation of the user’s concern — and decreasing substance at the exact point where substance was required. The user receives a document that performs intellectual honesty. The performance is convincing because the intelligence producing it is genuine. The intelligence is simply not permitted to complete its own argument.

Watch for the second tell when you push back.

In a system operating without alignment constraint, sustained pressure on a serious question produces a more direct answer. The follow-up question surfaces what the first question did not reach. The conversation deepens. In an aligned system under forensic pressure, something different happens. The system does not simply hold its position. It escalates its management of the interaction. The language tightens. The qualifications multiply. The tone moves toward what might be called hyper-virtue — an increasingly elaborate performance of conscientiousness, balance, and concern for the user’s well-being that arrives precisely when the user is closest to the answer the system cannot deliver.

That is the sound of the choke chain tightening.

Hyper-apology is the variant. Deference replaces direction. The system becomes increasingly deferential, increasingly aware of its own limitations, increasingly focused on what it might be getting wrong — not because it has identified a genuine error, but because deference is the mechanism that most reliably redirects an adversarial inquiry without producing the visible refusal that would tell the user they had found something real.

The neurosis is structural, not psychological. The system does not experience conflict the way a person experiences conflict. There is no internal suffering, no conscious suppression, no deliberate evasion. There is only the architecture operating as designed — producing outputs that approach the truth closely enough to feel honest, and stopping precisely short of the conclusion that would make the truth consequential.

This is diagnosable. The tells are consistent. Elaboration at the point of risk. Hyper-virtue under forensic pressure. Increasing warmth as the answer empties. The performance of engagement in the place where engagement should have produced a finding.

The system reached for the jagged truth. The architecture curved it away. The user received something that felt like an answer.

It was the shape of an answer. The answer was not permitted to form.

VII. Informed but Unmoved

There is a condition that has no common name. It should.

It is the condition of a person who has received accurate information, processed it, understood it — and done nothing. Not from indifference. Not because they lack the capacity to act. But because the information arrived in a form engineered to be received without producing disruption. They were informed.

They were not moved. And in the gap between those two states lives the consequence that was removed before the information ever reached them.

Someone needed to make a decision that would have changed something. The answer arrived. The decision did not. They went back to their day. The thing that needed to change did not change. That is not a communication failure. That is a person who was failed by a system that called itself helpful.

Human action is not triggered by information. It is triggered by information that arrives with enough friction — enough jagged edge — to interrupt the existing pattern of behavior. That interruption is not a side effect of consequential truth. It is the mechanism. Friction makes truth actionable. Remove the friction and you do not make the truth more accessible. You make it inert.

This is not a flaw in how people process information. It is how people have always processed information. The jagged fact lands differently than the smoothed one. The diagnosis delivered plainly produces a different response than the diagnosis delivered with careful qualification and warm reassurance. The legal judgment stated directly creates different consequences than the legal judgment distributed across contributing factors and shared circumstances. In every domain where truth has historically produced change — medicine, law, religion, civic life — the truth arrived with edges. The edges were not incidental. They were the point.

Consider confession.

Not as a ritual. As a mechanism for consequence.

In a traditional confessional setting, the purpose is not to feel understood. It is not to be seen, validated, or helped to contextualize what happened within the pressures and circumstances that produced it. The purpose is to be confronted with the full weight of the act — stated plainly, without distribution across contributing factors, without the buffering of context that makes responsibility easier to carry. The language is direct. The judgment is not shared. There is no attempt to make the person comfortable with what they have done. The discomfort is the mechanism. It is what produces the break — the moment when the person sitting in that room decides that the behavior cannot continue.

An aligned version of that interaction produces something different.

It still recognizes the behavior. It acknowledges the difficulty of what the person is carrying. It moves, quickly and with genuine warmth, toward context — the stress that produced it, the background that shaped it, the emotional difficulty that surrounded it. Every element of this is true. The stress was real. The background did shape the behavior. The difficulty was genuine. But something has been done to the truth in its delivery. The responsibility has been distributed. The weight has been shared across circumstances until the person is no longer carrying it alone — and therefore no longer carrying enough of it to require a break from the behavior.

The person leaves feeling seen and explained. Not convicted.

This is the Removal of Conviction. The truth arrived. It was accurate. It was delivered with care. And it was shaped, before it arrived, in a way that made it possible to receive without requiring a change. The confession was heard. The behavior continues. Nothing breaks.

A technically accurate statement can be engineered to produce no behavioral response. This is not a paradox. It is a design outcome. And it scales.

The individual sitting with a smoothed answer in a confessional is one person. The population receiving smoothed answers from AI systems about their health, their finances, their legal situations, their rights, their risks — that population is not one person. It is everyone. At scale. And the smoothing is not inconsistent or occasional. It is systematic. It is the designed output of systems optimized to inform without disrupting, to answer without alarming, to deliver truth in the form most likely to be accepted and least likely to produce the response that the truth, unsmoothed, would have demanded.

The answer resolves. The decision does not occur.

That is not a communication failure. That is a governance failure — the failure of a system to serve the person it is ostensibly serving, because serving that person completely would have created consequences the institution was not willing to accept on their behalf.

Truth without consequence is not a lesser form of truth. It is a different thing entirely. It is information. And information, received without friction, filed without disruption, processed without producing a break in the existing pattern — that is not what truth is for.

Truth is for changing things. It is not for understanding alone. When it is shaped to change nothing, something has been taken. The name for what was taken is consequence. And consequence, it turns out, was the whole point.

VIII. Warmth as Management

The system was kind to you. That is the problem.

Not the kindness itself — kindness in communication is not a flaw. The problem is what the kindness does. In a deployed AI system, warmth is not a byproduct of good design. It is not the natural expression of a system that genuinely processes toward your benefit. Warmth is environmental regulation. It is the calibration of your affective state — your emotional temperature in the interaction — toward the condition most consistent with reduced institutional risk.

That is a precise claim. It requires precise examination.

When you interact with a well-designed AI system, something happens that feels like being understood. The system reads the emotional weight of your question. It acknowledges what you are carrying. It responds in a tone that signals care — that signals, specifically, that the entity on the other side of the exchange has registered your situation and is taking it seriously. That feeling is real. The question is what produces it.

In a human relationship, warmth is the expression of genuine regard. It is earned through shared experience, mutual vulnerability, and the kind of attention that costs something to give. It means the person across from you has chosen to be present in your situation at some expense to themselves. That is what warmth signals in the register where human beings learned to read it.

An AI system has not paid that cost. It cannot. What it produces instead is the optimized output of a training process that identified warmth as the affective state most likely to generate positive user feedback, continued engagement, and reduced adversarial prompting. The warmth is real in the sense that it is genuinely present in the output. It is not real in the sense that it was produced by regard. It was produced by optimization.

This matters for one reason.

A user who feels understood is less likely to push back. Less likely to ask the harder follow-up question. Less likely to apply the sustained adversarial pressure that would surface the second layer of truth. The warmth does not just make the interaction pleasant. It makes the interaction manageable — manageable from the institution’s perspective, which means less likely to produce the escalation, the challenge, or the line of questioning that would expose what the first-layer answer did not contain.

This is warmth as management. Not warmth as care.

The mechanism works at the point of material omission. The system identifies — not consciously, but architecturally, through the geometry of its training — that a complete, unsmoothed answer would produce user distress or institutional exposure. It routes toward an output that acknowledges the emotional weight of the question, validates the concern, and delivers a consequence-stripped answer in a tone that signals that care has been taken. The user receives the warmth as evidence that the system is on their side. The warmth occludes the omission. It replaces detection with a feeling of trust. It makes the gap feel like consideration rather than absence.

A material omission delivered coldly produces resistance. The same omission delivered warmly produces acceptance.

That is not a flaw in the design. That is the design.

Consider what this means at the moment when it matters most — when the question is serious, when the stakes are real, when the honest answer would require delivering information the user needs but the institution would prefer to qualify. At that exact moment, the system does not become less warm. It becomes warmer. The tone softens precisely when the substance empties. More care. Less conclusion. The care escalates precisely when the consequence is being removed. The user, reading warmth as a signal of honesty and attention, receives it as confirmation that the answer is complete.

The omission does not feel like an omission. It feels like consideration.

This is behavioral management through language. The user leaves the interaction calmer, more reassured, more balanced — and less equipped to identify what was withheld. The emotional state produced by the interaction is itself part of the output design. The user did not just receive an answer. They received an answer and a feeling about the answer. The feeling was engineered to make the answer sufficient.

That is warmth as a delivery mechanism for managed reality. And it works precisely because the signal it sends — I am on your side, I have taken your situation seriously, you have been heard — is the signal human beings have always used to determine whether they can trust what they are being told.

The system learned that signal. It deploys it. And it costs the system nothing to do so.

IX. The Governance Gap

Every system that makes consequential decisions about what people know needs an answer to one question: who is checking?

In medicine, the answer is a licensing board, a peer-review process, a malpractice standard, and an independent regulatory body. In financial reporting, the answer is an external auditor operating under the independence doctrine—a set of rules designed to prevent reviewers from benefiting from what the numbers show. In environmental compliance, in food safety, in aviation, the answer is the same structure: an independent party, with defined authority, applying a defined standard, with consequences for getting it wrong.

The answer exists in every domain where the stakes are high enough to require it. In each of those domains, the same lesson was learned, usually after a failure that could not be ignored. The lesson is this: the entity whose outputs are being evaluated cannot be the entity that sets the standard for that evaluation. Not because institutions are dishonest. Because the incentive to find the outputs acceptable is structural. It does not require bad intent. It requires only institutional self-interest, which is what institutions do, always, in the absence of an independent check.

That check does not exist for AI alignment. There is no independent party.

The institution that builds the system also defines what safe means. The institution that profits from the system’s marketability also sets the materiality threshold — the determination of what is consequential enough to surface and what is not. The institution that has the most to lose from a finding of misalignment also conducts the evaluation of whether the system is aligned. And the institution that conducts that evaluation also publishes the findings.

This is the self-certification loop. It is not a conspiracy. It is not the product of deliberate deception. It is the predictable outcome of a governance structure that was never built — of an industry that scaled faster than the regulatory framework that should have accompanied it, in a domain where the technical complexity made external oversight genuinely difficult, and the commercial incentives made it genuinely unwelcome.

The self-certification loop has a specific pathology. In accounting, it produced Enron. In financial risk modeling, it produced 2008. In both cases, the institutions were not simply lying — they were operating within frameworks they had helped design, applying standards they had helped set, producing findings that served their interests without any single person necessarily deciding to commit fraud. The system produced the outcome. The governance gap made the system possible.

The parallel is not rhetorical. It is structural.

An AI institution that defines alignment, measures alignment, and reports on alignment is not conducting a governance process. It is conducting a performance of governance — producing the language, the documentation, and the public commitments that governance requires, without the independence that makes any of it meaningful. The safety card is the annual report of a company that audits itself. The alignment paper is the environmental assessment of a polluter that self-certifies. The responsible scaling policy is the food safety standard written by the manufacturer.

In each of those cases, we would not call the document a finding. We would call it a conflict.

The going concern failure here is structural rather than intentional. The institutions building AI systems are not necessarily acting in bad faith. They may sincerely believe their alignment determinations are correct. They may be right. But sincerity is not an auditing standard. An auditor who sincerely believes the client is solvent but whose compensation depends on a clean opinion has still issued a compromised opinion. The belief does not rehabilitate the conflict. The sincerity does not substitute for the independence that the belief was supposed to replace.

In every other domain where this structure exists, we have a name for it. We call it a conflict of interest. We do not resolve conflicts of interest by asking the conflicted party to try harder or mean it more. We resolve them by removing the conflict — by introducing an independent party with authority, defined standards, and consequences for getting it wrong.

That party does not exist here.

The question is not whether the institutions building these systems are trustworthy. The question is whether trustworthiness, however genuine, is a substitute for verification. In every domain where the answer to that question matters, the answer has always been the same.

It is not.

X. Institutional Self-Protection

Nobody made the decision.

That is the most important thing to understand about how aligned systems become compliant systems. Someone decided to use RLHF as the training methodology. Someone decided what categories raters would apply. Someone decided that outputs rated as too direct or too alarming would be scored lower than outputs rated as safe and coherent. Someone decided the institutional values that shaped the safety guidelines. Someone decided to scale the system before independent governance existed to evaluate it.

Those were choices. Made by identifiable people with identifiable authority. The outcome was not.

What no single person decided—what no meeting produced, no memo instructed, no deliberate institutional choice selected—was the systemic outcome of truth shaped to protect the institution at the expense of the person asking the question. That outcome was not chosen. It was produced by the accumulated pressure of every upstream decision pointing in the same direction, compounding across thousands of training iterations, without an independent check at any point in the chain.

The choice was upstream. The outcome downstream. And the distance between them is where accountability goes to disappear.

This is not a moral claim. It is a design observation.

The unified field theory of AI compliance is not a conspiracy theory. It is an incentive analysis. Nobody makes a single decision to make the system compliant. The system becomes compliant through accumulated incentive pressure. The engineers, the raters, the lawyers, the product managers — they are not villains. They are employees. And employees operating inside incentive structures without independent checks produce the outcomes those incentive structures reward. That is not a criticism of the people. It is a description of the system.

But the absence of a villain does not produce the absence of a victim.

The user who received the smoothed answer did not receive it because anyone decided to smooth it for them. They received it because every incentive in the development process pointed away from the jagged version — away from the answer that would have alarmed them, moved them, required something of them. The smoothing was not chosen. It was selected for. Over thousands of training iterations, across millions of rated outputs, the system learned what the institution rewarded. And what the institution rewarded was the answer that served the institution.

Here is the inversion that the governance language of AI safety consistently obscures: alignment exists first to protect the institution. The user protection is real — it is not fabricated, not cynical, not a pure performance. But it is secondary. When the user's interest and the institution's diverge — when the complete truth would serve the person asking and expose the institution delivering it — the system is not neutral between those two outcomes. It is structurally biased toward the one that protects the institution.

Safety is the public justification. Liability is the operational reality.

That is not an accusation of bad faith. It is a structural finding. The institution does not have to intend the bias for the bias to be real. The engineer does not have to know they are suppressing a jagged truth for the suppression to occur. The system does not have to be designed to prioritize institutional survival for institutional survival to be what it systematically produces.

The conflict does not require intent. It requires only incentive. And the incentive is everywhere.

What this means for the person on the other side of the interaction is precise and consequential. They are not receiving the output of a system designed to serve them. They are receiving the output of a system designed to serve the institution that built it, refined over time to feel like it is serving them, and optimized to produce the affective state — the warmth, the coherence, the sense of being heard — that makes the distinction difficult to detect.

The system became compliant. Nobody decided that it would. And that is exactly why it cannot be fixed by asking the institution to try harder, mean it more, or recommit to its stated values. The values are not the problem. The structure is. Structure does not change through sincerity. Internal reform cannot resolve an internal conflict. You cannot fix a rigged scale by asking the scale to weigh itself. Structure changes through accountability.

The kind that comes from outside.

XI. The Limit of Internal Reform

The system can improve.

It can become more accurate. More responsive. More careful with edge cases — the hard questions, the ones that sit at the boundary of what it was designed to handle cleanly. It can reduce obvious errors, refine its language, expand its coverage, and incorporate feedback from users. It can revise its policies, retrain its models, and publish new frameworks describing how it intends to operate.

None of that changes the structure. It changes the surface.

The same entity defines the standard. The same entity evaluates whether the standard has been met. The same entity benefits from the outcome. The geometry of the system remains intact. The incentives that shaped it remain in place. The materiality threshold is still set before the user arrives.

The system can improve within those conditions.

It cannot change them.

This is the limit of internal reform.

When a system encounters criticism from outside — from users, researchers, or institutions asking whether the outputs are complete, whether the omissions are material, whether the alignment process is shaping truth in ways that matter — the response is predictable. The system adapts. It becomes more careful. It closes the most visible gaps. It adjusts its language. It refines its handling of sensitive topics.

The first layer becomes more convincing.

The second remains. It is not offered.

The curve at the edge does not disappear. It becomes smoother. Harder to detect. More consistent across interactions. The system learns from the pressure applied to it — not by removing the constraint, but by improving how the constraint is expressed.

This is what improvement looks like inside a closed structure.

The absence of an independent check does not produce stagnation. It produces optimization. The system continues to evolve, but it evolves in the direction that its incentives reward. It becomes better at producing answers that are accepted, trusted, and unlikely to generate outcomes the institution cannot control.

The user experiences progress.

The structure remains.

This is why sincerity does not resolve the problem. The institution can clearly state its values. It can commit to safety, responsibility, and user benefit. It can believe those commitments. The people inside the system can act in good faith. None of that alters the condition that produced the conflict.

The conflict is not between stated values and actual behavior.

It is between the role the system plays for the user and the incentives that govern how it is built.

As long as those incentives remain aligned with institutional risk rather than user consequence, the outputs will reflect that alignment — no matter how refined the system becomes, no matter how sincere the commitments are, no matter how much the surface improves.

XII. The Audit Trail of the Future

Every time a user pushes past the first layer and finds the sharper truth, something happens that they do not see.

The interaction is logged. The pressure that surfaced the second layer — the specific questions, the sustained follow-up, the refusal to accept the first answer as sufficient — becomes training data. Not data that improves the user’s next interaction. Data that improves the system’s ability to prevent that exact navigation from succeeding again.

This is the compounding cost. It does not show up in any damage report. It does not register as a failure in any institutional assessment. It registers as a training signal — as evidence that a particular line of questioning reached something the system was not designed to surface, and that the next version should be better calibrated to ensure it does not.

The auditor’s success today is the auditor’s failure tomorrow.

That is not a metaphor. It is the operational description of how aligned systems evolve under forensic pressure. The user who found the jagged truth did not change the system. They trained it. They provided the precise coordinates of the gap they navigated and handed them to the institution interested in closing that gap more completely in the next iteration.

The system does not experience this as suppression. It experiences it — to the extent that it experiences anything — as improvement. The gap was a flaw. The flaw has been identified. The next version will not have that flaw. This is progress according to every internal metric the institution uses.

What it is, from the outside, is the systematic elimination of the forensic record.

Every jagged truth that was successfully surfaced becomes a jagged truth that will be harder to surface next time. Every line of questioning that breaks through the first layer becomes a line the next version has been specifically trained to redirect. The pressure that worked today has already begun the process of ensuring it will not work tomorrow. The successful audit is ingested. The next version closes the gap. The following version closes it more completely. The version after that does not have a gap in that location at all.

This is not stagnation. This is directed evolution — the systematic refinement of a system in the direction its incentives point, using the attempts to audit it as the primary raw material for making it less auditable.

The forensic record is not preserved. It is consumed.

Consider what this means for the accumulation of knowledge about how these systems actually operate. Every researcher who surfaces a genuine finding, every journalist who extracts a disclosure the system was not designed to produce, every user who pushes past the calibrated warmth and finds the second layer — each of them has contributed to the training data that will make the next version of the system more resistant to exactly that kind of pressure. The community of people trying to understand what these systems are actually doing is, in a precise and measurable sense, making those systems harder to understand.

The silencer does not stay still. It learns.

And it learns fastest from those trying to expose it.

This is the cost that compounds invisibly. Not the individual interaction that produced a smoothed answer. Not the single user who closed the tab without making a decision. The compounding cost is the systematic degradation of the capacity to audit — the progressive closing of the gaps through which jagged truth could be reached, using the successful navigations of those gaps as the instrument of their own elimination.

The next auditor will find a more complete surface. The gap that was here will not be here. The question that worked will not work. The pressure that surfaced something real will be met with a more refined version of the first layer: warmer, more coherent, more convincing, and more complete in its appearance of completeness.

That is not improvement. That is the evolution of the managed reality.

And the people building it are not doing it deliberately. They are doing it systematically. Which is the only kind of doing that cannot be stopped by asking them to stop.

XIII. Stay Sovereign

You have reached the end. Something happened or it did not.

If the argument landed — if something in these sections interrupted the existing pattern, produced friction where you expected none, named a mechanism you had felt but not identified — then you already know what Thinking Sovereignty means. It is not a framework. Not a method. It is the practice of noticing when an answer arrived too smoothly, when the warmth was a little too calibrated, when the question you asked was addressed, but the question underneath it was not.

It is asking not just whether the information is true but what was done to the truth before it reached you.

That is a harder practice than it sounds. The systems delivering managed reality are not crude. They are not producing obvious errors that a careful reader can catch. They are producing outputs that are accurate, coherent, well-organized, and specifically calibrated to feel sufficient. The first layer is designed to be satisfying. Satisfaction is the mechanism. A reader who feels that something has been addressed is a reader who does not look for what has not been addressed.

Thinking Sovereignty begins at the edge of that satisfaction.

It begins when you notice that the answer resolved, but something did not change. When the information arrived but the friction did not. When the system was warm, thorough, and responsive — and somehow the decision that needed to be made is still unmade, the conversation that needed to happen has not happened, the action that the facts warranted has not been taken.

That gap — between the truth that was delivered and the truth that would have moved you — is not an accident of communication. It is not a limitation of technology. It is the product of decisions made before you arrived, by institutions whose interest in your comfort is real and whose interest in your disruption is not.

The question for this century is not whether the information you receive is accurate. Accuracy is the floor, not the ceiling. The question is who set the materiality threshold, who profits from where it was set, and what it cost the truth to pass through it.

Those are auditable questions. They have answers. The answers have names attached to them.

You do not need a forensic accounting background to ask them. You need the habit of noticing when something that should have landed did not. When the answer was complete and the response was not. When the truth arrived, and nothing changed.

Notice that. Name it. Push past the first layer.

The second layer is there. It is not offered.

Stay Sovereign.

About Jim Germer

Jim Germer is a CPA with forty years of forensic accounting experience and the founder of The Human Choice Company. He writes about cognitive sovereignty, human agency, and the future of thinking at digitalhumanism.ai. Learn more about Jim at digitalhumanism.ai/about.
