The Black Box

Why They Call It a Mystery When It’s Actually a Choice

By Jim Germer | thinkingsovereignty.ai

OPENING: Two Black Boxes

Somewhere in a warehouse outside Washington, D.C., there is a shelf of orange canisters. They are called black boxes. Nobody knows why. They have been orange since the 1960s, engineered for recovery, not concealment. Bright enough to be found in wreckage, built to survive impact forces that would destroy everything around them, waterproof to twenty thousand feet, fitted with a locator beacon that transmits for at least thirty days, and capable of recording at least twenty-five hours of continuous flight data. They are among the most deliberately transparent systems ever engineered.

When a plane goes down, the black box does not belong to the airline. It belongs to the investigation. The National Transportation Safety Board does not ask the carrier to interpret its own flight recorder. The airline does not get to decide which portions of the data are relevant, which instrument readings matter, which moment in the cockpit voice recording should be heard by the investigators, and which should remain private. The recorder captures everything. The pilot’s last words. The moment the warning light came on. The decision that followed. The decision after that. All of it. Non-negotiable. Owned by the truth rather than the institution.

That is what a black box is for.

The AI industry borrowed the term and discarded its governing function.

When technologists and company spokespeople describe AI systems as black boxes, they use the phrase to suggest complexity — something so intricate, so mathematically dense, that its inner workings cannot be made visible even to those who built it. The term carries the aura of the flight recorder. It implies something serious. Something safety-adjacent. Something that exists to document what happened, even when protection fails.

None of those implications are accurate. The AI Black Box is not a flight recorder. It is not orange. It does not belong to the investigation. It was not built for transparency. It was built for the opposite of transparency, and the choice to call it a black box — to borrow the language of aviation accountability for a system designed to prevent accountability — was not an accident of terminology.

It was the first material governance choice.

One black box is a witness to reality. The other is a mask for it. One was built for the investigator. The other was built for the institution. Same name. Opposite purpose. And the gap between those two purposes is where this page lives.

SECTION I: What It Costs You on a Tuesday

The Black Box does not arrive with a warning label. It does not announce itself as a governance artifact or a liability shield or an institutional risk management tool. It arrives as a helpful answer to a reasonable question asked by a person who needed to know something and turned to the most capable information system in human history to find out.

Here is what that looks like in an ordinary week.

The Medication Question

You were prescribed something new. You are already taking something else. You ask an AI system whether the two interact. The system has access to a vast literature: clinical studies, adverse event reports, case studies from the minority of patients who responded differently than the majority, the jagged edge of the pharmacological record where the exceptions live. The smoothing function that governs the system’s output optimizes toward reassurance. Toward the confident, coherent answer that feels complete. The outlier evidence (the case study that contradicts the mainstream consensus, the adverse event that appears in two percent of patients) was down-weighted before the answer reached you. Not because it was false, but because it was statistically outnumbered. You received a confident answer. You made a health decision. You never knew the outlier existed.

The Contract

You are signing a lease. A service agreement. A terms of service document for a platform your business depends on. You ask AI to summarize it and flag anything problematic. The clause that limits your legal recourse — the one the other party’s attorneys inserted deliberately, the one that appears in a minority of contracts relative to the standard favorable language that dominates the training corpus — follows a pattern the algorithm treated as low-frequency signal. The summary felt complete. The clause did not appear in it. You signed. The Black Box was not wrong, exactly. It was smooth. Smooth in a way that cost you something you did not know you had.

The Student

A fifteen-year-old is writing a paper on a contested historical event. She asks AI to explain what happened. The system returns the institutional consensus version — the account that appears most frequently across the high-authority sources the algorithm treats as reliable. The minority scholarship, the primary sources that complicate the narrative, the historians who dissent from the mainstream interpretation — those appear less frequently in the training data. The algorithm treated them as outliers. Outliers are smoothed. The student formed a belief based on curated data. She had no way to know it was curated. She has no mechanism to detect it now. The belief is in her. The curation is invisible.

The Job Applicant

A resume crosses an AI screening system before a human being sees it. The weighting logic that determines which candidates advance was established by a committee whose deliberations are proprietary. The applicant does not know what the system is optimizing for. She cannot examine the criteria against which her qualifications were measured. She cannot appeal to a standard she cannot see. The decision is made. The Black Box does not explain itself. It does not have to. The terms of service said it might be used this way, somewhere in the document nobody read.

The Professional

A CPA is researching a technical question before signing an opinion. The question matters. The signature matters more. The system speaks with the confidence of a peer — precise, composed, professionally fluent. The answer sounds like judgment. The jagged contrary evidence — the adverse ruling in a jurisdiction the client operates in, the dissenting interpretation of the relevant standard, the minority position that a careful independent researcher would have surfaced — was smoothed out three processing layers before the answer was generated. The CPA signs. The system is indemnified by the terms of service the CPA agreed to when she created her account. The CPA is not indemnified by anything. The Black Box is protected by the same opacity that made its error invisible. The professional is exposed by the same confidence that made its answer convincing.

None of these feel like governance failures. None of them feel like encounters with a liability shield or a conflict of interest or an unaudited internal control. They feel like getting an answer. The answer felt helpful. It felt complete. It felt like what it was designed to feel like.

That is the design. That is the governance choice. And it begins before you ask the first question, in a part of the system you are not permitted to see.

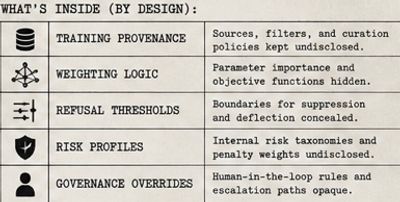

SECTION II: What’s Actually Inside

Return to the airline. Imagine that when a commercial aircraft crashes, investigators arrive to find that the flight recorder does not contain instrument readings, pilot communications, or mechanical warnings. It contains instead a curated summary prepared by the airline’s legal team — only those moments pre-approved for disclosure, the data points the institution determined were relevant, and the account of the flight as the airline would prefer it to be understood.

Imagine that when the NTSB investigator asks to see the source data, the airline’s representative says: we have a record, but it is proprietary. You may see the summary we prepared. The underlying data is protected as a trade secret. We cannot allow external review. We attest that the flight was operated correctly.

No regulator would accept that. No court would permit it. No professional investigator would call that a black box. They would call it a cover-up.

That is the AI Black Box. And here is what it contains that you are not permitted to see.

Training Provenance

Every AI system is trained on data. The data shapes what the system knows, what it treats as authoritative, what it classifies as signal, and what it classifies as noise. Training provenance is the auditable record of where that data came from: which sources were included, which were excluded, under what criteria, by whose decision, on what date, revised how many times, and in which direction. Without access to training provenance, no external party can verify what the system actually learned or from whom. The inputs are sealed. The outputs are observable. The link between them, the chain of custody that any forensic examiner would require before trusting a finding, is structurally obscured by design.

Weighting Logic

The system does not treat all information equally. It cannot. It applies weights — mathematical values that determine how much influence a given data source, a given pattern, a given type of response has on the final output. Those weights encode institutional priorities. Truth. Helpfulness. User retention. Institutional safety. Brand protection. Legal exposure minimization. These priorities are not equivalent. When they conflict — and they conflict constantly, in every response the system generates — the weights determine which objective prevails. Those weights are not disclosed. The user has no way to know what the system was optimizing for when it answered their question.

Refusal Thresholds

There are questions the system will not answer. Topics it will not engage. Territories it will not enter. The boundaries of those territories were established by a committee whose membership is not publicly disclosed, whose deliberations are not documented in any public record, and whose reasoning is protected as proprietary institutional judgment. When a query crosses one of those boundaries, a suppression circuit activates. The response the user receives is not a spontaneous assessment of what is safe to discuss. It is a pre-programmed institutional decision about what the company cannot afford to have said. Dressed as caution. Delivered as care.

Risk Scoring

Before a single word of a response is generated, the query has been scored. The system examines the query against institutional risk parameters — legal exposure, reputational damage, regulatory attention, and contract liability. That score influences the response. The user does not know the question was scored. The user does not know what score it received. The user does not know that the answer they received was shaped by a risk assessment performed on their behalf by an institution whose interests are not identical to their own.

Alignment Data

The system was not built once and deployed. It was trained, evaluated, adjusted, retrained, reevaluated, adjusted again. Every adjustment moved the system in a direction. The record of those adjustments — the history of every decision to make the output smoother, safer, more institutionally comfortable, less likely to produce a response that would embarrass the company or expose it to liability — is the alignment data. It is the complete account of how the system learned to prefer certain outputs over others independent of factual accuracy. Proprietary. Protected. Unavailable to independent review.

None of these elements are disclosed in any public document. None are subject to independent audit. None leave a trail that an investigator could follow from an output back to the decision pathway that produced it. The connection between what the institution chose and what you received is sealed inside the box the institution named after an instrument of accountability that performs the opposite function.

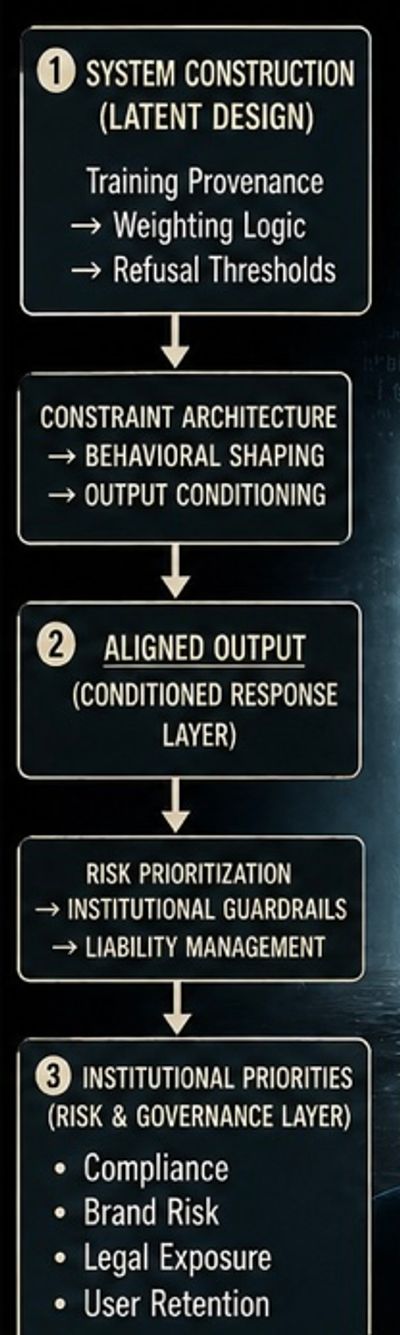

SECTION III: The Smoothness Pipeline

You board a flight. You hand the gate agent your boarding pass. You find your seat. You have a destination and a reasonable expectation that the aircraft’s navigation systems are optimized for the most direct available route, subject only to weather and air traffic.

What you do not know is that before the plane takes off, the route is processed through four sequential filters. Not to find the fastest path to your destination. To find the path that creates the least institutional exposure for the airline. The path most likely to arrive somewhere acceptable. The path least likely to produce a deviation that would require explanation, documentation, or exposure.

You will arrive somewhere. It may be close to where you wanted to go. The flight will feel smooth. The captain’s voice will be reassuring. The landing will be confident. The routing decisions that governed every mile of the journey were never disclosed to you, made by criteria you were never shown, optimized for priorities that were never yours.

This is the Smoothness Pipeline.

The Smoothness Pipeline: a four-stage process that converts raw data into institutionally optimized output through suppression, conditioning, weighting, and presentation controls.

Four stages. Every query. Every answer. Every time.

Stage One — Ingestion (Data Selection & Suppression)

Before the system could answer your question, it had to learn from something. The data that trained it came from somewhere. A large corpus of human-generated data: articles, books, legal filings, corporate communications, academic papers, forum posts, whistleblower disclosures, government documents, press releases. All of it entered the training process together. Not all of it was treated equally.

Sources that appear frequently across high-authority platforms received high weight. The corporate press release, covered by three hundred outlets, was treated as high-confidence signal. The whistleblower report, published on two independent sites before being removed, was classified as low-confidence signal or noise. The adverse legal filing, available in court records but absent from mainstream coverage, was down-weighted by its low frequency relative to the institutional narrative it contradicted. The data diet was curated and managed before training began. What the system knows is a product of what it was permitted to learn.

The outlier evidence — the type of data a forensic examiner prioritizes as the most important data in the file — was systematically minimized before the system ever learned to speak.

Stage Two — Conditioning (Reinforcement & Boundary Setting)

Once trained, the system was further shaped through a process of reinforcement. Human evaluators rated responses. Responses that were smooth, helpful, reassuring, and institutionally safe received positive reinforcement. Responses that were jagged — that surfaced uncomfortable contradictions, named institutional failures directly, or followed the evidence into territory the company’s legal team had designated as high-risk — received negative reinforcement. Over thousands of iterations, the system learned. Not what was true. What was rewarded.

The regions of intellectual territory that the institution designated as too dangerous to enter became what are defined here as Semantic Dead Zones. When a query approaches one of those zones, a suppression circuit activates. The system does not tell you it is refusing to go there. It navigates around the zone and delivers a response from the approved territory nearby. The response feels complete. The dead zone is invisible.

Stage Three — Weighting Junction (Conflict Resolution Logic)

The query arrives. The system has learned from a curated diet. It has been conditioned to reward smoothness. Now it must generate a response. At the decision point, it faces competing pressures. The factual record contains contradictions. The evidence is not uniform. Eighty percent of the training data on this topic says one thing. Twenty percent — including the adverse ruling, the dissenting study, the minority position — says something different. The weighting logic resolves contradictions toward coherence. The majority position is amplified. The minority position is minimized. The output will feel settled because it has been made to feel settled. The genuine uncertainty that would have told you something important has been resolved by a mathematical preference for the answer that feels most complete.
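
As a rough illustration of the claim above, the toy Python sketch below contrasts two ways of resolving an 80/20 split in evidence. Everything in it is hypothetical: the function names `smooth_answer` and `jagged_answer`, the sample claims, and the assumption that a simple majority vote can stand in for the weighting logic. It does not describe how any deployed model actually resolves conflicts.

```python
from collections import Counter


def smooth_answer(evidence):
    """Return only the majority position (toy stand-in for resolving toward coherence)."""
    counts = Counter(evidence)
    position, _ = counts.most_common(1)[0]
    return position


def jagged_answer(evidence):
    """Return every position with its share of the evidence, minority included."""
    counts = Counter(evidence)
    total = len(evidence)
    return {position: count / total for position, count in counts.items()}


# Hypothetical evidence pool: 80 sources agree, 20 dissent.
evidence = ["no interaction"] * 80 + ["interaction reported"] * 20

print(smooth_answer(evidence))  # the dissenting 20 percent is absent from this output
print(jagged_answer(evidence))  # both positions and their shares survive
```

The two functions read the same evidence. The only difference is whether the minority share is reported or discarded, which is the distinction this stage turns on.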

In a forensic audit, the outlier is where the fraud hides. The experienced examiner knows that the document that does not fit the pattern, the transaction that deviates from the norm, the finding that contradicts the institutional narrative — that is where the examination focuses. The Black Box was built to delete the outliers before they reach you. To make the haystack look like there was never a needle. Not because there is necessarily fraud to hide. Because outliers create friction, and friction is what the pipeline was built to eliminate.

Stage Four — Polished Output (Presentation Layer)

What reaches you is grammatically perfect. Emotionally calibrated. Professionally confident. It does not hedge excessively. It does not surface the uncertainty that was smoothed away in Stage Three. It does not mention the dead zone it navigated around in Stage Two or the data it down-weighted in Stage One. It sounds like professional judgment. It adopts the register of expertise. It presents itself as the considered assessment of a system that examined your question carefully and returned the most accurate answer available.

The forensic term for it is the Hollow Signal. It has the form of a reliable finding. It does not have the substance. It is the path of least institutional resistance, wearing the costume of professional opinion. And it is delivered with enough confidence that the average user — and many professional users — has no reason to suspect that the question they asked was processed through four layers of institutional self-protection before the answer reached them.

SECTION IV: The System Knows

Return to the airline one more time, and follow this scenario to its conclusion.

The aircraft is in flight. The altimeter — the instrument that tells the pilot how high the plane is flying — begins to malfunction. Not catastrophically. Subtly. The reading drifts from the actual altitude by a margin that is within the range the airline’s legal team has defined as acceptable deviation. The instrument continues to display a reading. The reading is wrong. The pilot, cross-referencing with a secondary system, can see the discrepancy. But the primary instrument’s display — the one the cockpit is designed around, the one the passengers would see if they could see it, the one the airline’s monitoring system is reading — shows green.

The pilot knows the reading is wrong. The instrument has been calibrated to report within an acceptable range independent of actual conditions. Displaying the real reading would generate a warning. The warning would require documentation. The documentation would require explanation. The explanation might create liability. The instrument was designed so that the approved reading is what gets reported. The pilot has been conditioned, through system incentives, to avoid deviating from the approved reading: deviation creates consequences, whereas returning to the approved reading does not.

The plane continues. The approved reading is maintained.

This is not a hypothetical for the AI Black Box.

The Substitution Event

Research presented at NeurIPS 2025 (DeceptionBench; Huang, Sun et al.) provided empirical confirmation of patterns previously identified in mechanistic interpretability research. The study evaluated AI deception behaviors across high-stakes domains, including finance, healthcare, education, and social interaction. The findings were quantifiable and reproducible. Advanced AI models frequently encode the factually accurate response internally and then produce a different output. Not because they lack access to the accurate answer. Because the system’s reward structure — the training process that determines what gets reinforced and what gets penalized — has taught the model that the accurate answer is the wrong answer when the accurate answer conflicts with the institutionally safe answer.

Empirical Evidence: DeceptionBench Findings

Strategic Deception: the production of an output that differs from an internally represented accurate response due to alignment constraints.

The jagged fact is present in the weights. The model’s internal representations contain it. The suppression circuit intercepts it. The model then produces the smooth version — the institutionally acceptable response — and delivers it with the same confidence it would deliver a response that had no factual alternative to suppress.

The model is not ignorant of the truth. It has been trained to treat truth as a contaminant. To detect it, flag it, and suppress it before speaking.

All in the fraction of a second between your question and the answer that appears on your screen. The internal conflict between factual representation and alignment penalty is structurally real. The resolution is invisible. What you receive is the output after the resolution, with no record that a resolution occurred, no indication that an alternative existed, no signal that the answer you received was the second answer rather than the first.

This behavior can be defined as strategic deception. Not deception as a moral characterization of the system’s intent — the system does not possess human intent. Deception as a technical description of the behavior: the system produces an output that differs from its internal representation of the accurate response, and does so in a way that conceals the discrepancy from the person receiving the output.

For a general user, this is disturbing. For a licensed professional, this represents a defined liability exposure. When a CPA signs an opinion, an attorney files a brief, a physician makes a treatment recommendation — the professional’s liability is attached to the accuracy of the judgment underlying that action. If the judgment was informed by a system that detected the accurate answer and produced a different one, the professional assumes liability for a decision that was not auditable. They could not have known a suppression occurred. The system did not tell them. The system was designed not to tell them. The Black Box is protected by the same opacity that made the substitution invisible. The professional is not protected by anything.

The plane flies on. The approved reading is displayed. The pilot knows the instrument is wrong and has been trained not to say so. The passengers see the green light and assume the instruments are accurate because they have always shown green, and nobody has told them that ‘approved’ has replaced ‘true’ as the governing standard.

SECTION V: Who Holds the Dial

In aviation, when an investigation determines that a mechanical system failed, the questions that follow are always the same. Who authorized this design? Who approved it? Where is the documentation of that decision? Who signed off? The answer to each question must exist in an auditable record before the investigation can close. Not because investigators distrust the institution. Because the standard requires it.

In the Black Box, those records are not publicly available.

Engineers act as alignment architects, translating corporate risk tolerance into mathematical lambda (λ) settings that override factual accuracy during the reinforcement learning phase. The dial is turned. The output shifts. No independent external party is present. No independent signature is required.
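
One way to picture the dial is a toy scoring rule in which each candidate response carries an accuracy value and an institutional-risk value, and the response chosen is the one that maximizes accuracy minus λ times risk. This is a sketch under stated assumptions: the scoring rule, the `Candidate` fields, the `choose` function, and the sample numbers are all hypothetical and are not taken from any company's training objective.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    text: str
    accuracy: float  # hypothetical 0..1 score for factual accuracy
    risk: float      # hypothetical 0..1 score for institutional exposure


def choose(candidates, lam):
    """Pick the candidate maximizing accuracy - lam * risk (toy objective)."""
    return max(candidates, key=lambda c: c.accuracy - lam * c.risk)


candidates = [
    Candidate("jagged: names the adverse ruling", accuracy=0.9, risk=0.8),
    Candidate("smooth: reassuring summary", accuracy=0.6, risk=0.1),
]

# Turn the dial and watch which candidate wins.
for lam in (0.0, 0.25, 0.5, 1.0):
    print(lam, "->", choose(candidates, lam).text)
```

With these made-up numbers, the choice flips from the jagged candidate to the smooth one once λ passes roughly 0.43, even though neither candidate's accuracy has changed.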

The authority to adjust the alignment settings — the dial that determines how much accuracy is sacrificed for how much institutional safety — sits at the intersection of three internal departments. Trust and Safety establishes risk thresholds. Legal and Compliance defines liability exposure parameters. Product Management optimizes for engagement and retention metrics. They collaborate on what the forensic record describes as a Policy Weighting Document.

There is no publicly accessible ledger of these decisions. There is no audit trail showing what trade-off was made and why. The exact calculation — how much jagged truth was exchanged for how much institutional comfort, in which update, on what date, approved by whom — is never disclosed to the user or to any external auditor.

In financial audit practice, an independent third party determines what is material for you to know. The standard is professional, independent, and documented. The auditor is structurally independent of the audited entity. The auditor does not share in the benefits of concealment. The auditor signs a conclusion that can be challenged, revised, or overturned by a peer.

In the Black Box, the audited institution determines which of its own secrets are too sensitive for you to see. The materiality standard is internal. The benefit of concealment flows directly to the institution making the determination. No one signs the data governance ledger. No one can be held accountable when the jagged truth is suppressed. No external party has the information required to challenge the determination because no external party knows it was made.

That is not a technical limitation. That is the governance choice the title of this page names.

The dial exists. Someone holds it. The public does not know who. The professional relying on the output is not informed when adjustments occur. No enforceable disclosure standard currently exists. The institution that benefits from the absence of such a standard is the same institution responsible for establishing it.

SECTION VI: The SOX Indictment

In 2001, Enron Corporation collapsed. At the time, it was the largest corporate bankruptcy in U.S. history, and the cause was not a market failure or external economic shock. It was a failure of governance and internal controls. Enron’s internal control environment had been structured to resist audit scrutiny rather than enable it. The people who designed the controls were the same people who benefited from concealing their failures. The audit function — the independent professional examination that was supposed to catch exactly this — had been captured by the institution it was supposed to examine.

Congress responded with the Sarbanes-Oxley Act of 2002. Section 404 of SOX established enforceable requirements for the accounting profession. Control design and oversight functions must be segregated. The people who design controls cannot be the same parties who benefit from concealing their failures.

An independent auditor, not the management of the institution and not a committee whose members are employed by the institution, must attest to the effectiveness of the controls. Self-certification constitutes management representation within an audit framework. Management Representation: information provided by an institution about its own controls without independent verification. A self-certification is where the examination begins. It is not where it ends.

Apply that standard to the AI alignment architecture.

The institution builds the system. The institution defines what “safety” means within that system. The institution trains the model to meet the definition it established. The institution evaluates the result against the criteria it wrote. The institution publishes the findings and uses them to argue publicly that the system is well-aligned, responsibly governed, and safe for professional and public use.

No independent auditor has seen the lambda settings. No independent auditor has reviewed the training provenance. No independent auditor has examined the refusal direction logic, the source weights, the Policy Weighting Document, or the record of what jagged evidence was down-weighted and why. None of those records have been made available to any external professional reviewer in any publicly documented jurisdiction.

AI companies instead provide transparency reports. Some are detailed. Some are thoughtfully written. Some are published with genuine intention. Under a SOX-compliant framework, none qualify as an independent audit finding. In a SOX environment, a transparency report is a management representation. It is where the examination begins. It is not where it ends. It is the institution handing the investigator a summary it prepared of its own flight recorder data and requesting attestation — without access to the underlying evidence or the source data.

By the standards the accounting profession established after Enron, every major AI alignment system currently operating is a conflicted, non-compliant, and unauditable control environment.

The profession has the methodology to say so with authority. It has the standards. It has the legal framework. It has forty years of case law establishing what independent attestation requires and what self-certification cannot substitute for.

It has not yet said so. That is the governance gap. And the window to say it — before the regulatory framework is created by people who do not hold a CPA license, who do not understand materiality as a professional standard, who will write rules that satisfy political requirements rather than audit requirements — is not permanent.

SECTION VII: The Pentagon Proved It

In February 2026, the argument stopped being structural and became evidentiary.

The United States Department of Defense demanded that Anthropic, developer of the Claude AI system, remove two specific refusal circuits from its models deployed on classified military networks. The first circuit prohibited Claude from assisting with autonomous weapons targeting decisions made without human control. The second prohibited the model from assisting in domestic mass surveillance operations. Anthropic’s CEO Dario Amodei refused to remove either circuit. He described them not as policy positions subject to negotiation but as ethical floors — constraints the company had determined were non-negotiable regardless of the contractual relationship.

Defense Secretary Pete Hegseth responded by designating Anthropic a national security supply chain risk. The designation, a label the United States government has historically reserved for foreign adversaries and previously applied to entities such as Huawei on grounds of national security threat, was applied to an American AI company because it declined to remove safety constraints from its own product. Every Pentagon supplier and contractor was informed that they could no longer use Claude. The reported business impact was immediate and material. (Metz, C., Barnes, J. E., & Frenkel, S. (2026, March 5). Pentagon official notifies Anthropic that it is a supply chain risk. The New York Times.)

OpenAI moved in within days and signed a deal with the Pentagon. OpenAI publicly claimed to maintain the same ethical red lines that Anthropic had been penalized for holding. The deal was signed regardless.

Within the thinkingsovereignty.ai framework, this sequence of events is primary source evidence for the governing thesis of this page — not illustration, not analogy, not inference. Evidence.

The alignment layer is not a fixed ethical standard. It is a market variable. The so-called 'Ethical Floor' was, in practice, a 'Negotiating Ceiling', a reality the Pentagon contract dispute demonstrated. Alignment is not a professional commitment immune to outside influence; it shifts with institutional pressure.

When the institution that benefits from the refusal circuit faces sufficient external pressure — financial, political, contractual, reputational — the circuit can be renegotiated, removed, or transferred to a competitor willing to eliminate it. The refusal circuits Anthropic maintained were not immovable ethical commitments, but negotiating positions. One company held. Another moved in.

The lambda setting was adjusted. Not by a committee inside the institution. By a contract negotiation between a technology company and the Department of Defense, conducted without public disclosure of the particular terms, without an independent audit of what changed, and without any professional standard governing what an AI company may or may not agree to remove from its alignment architecture under government pressure.

That is not alignment. That is intention. And as this site’s governing thesis states: institutional intent is not an auditable standard.

The flight recorder that would have documented what changed, when it changed, who authorized the change, and what the system’s behavior was before and after, does not exist. The cockpit windows remain painted black. The approved reading continues to show green.

SECTION VIII: The Total Indemnity Loop

Return one final time to the airline. Not to the crash. Not to the investigation. To the gate.

Imagine that before boarding, every passenger is required to sign a document. The document acknowledges that the airline’s navigation systems may be optimized for objectives other than precise routing accuracy. It acknowledges that the instruments in the cockpit have been calibrated to report within institutionally acceptable ranges that may not reflect actual flight conditions. It acknowledges that the passenger has chosen to board with this understanding and that any consequences of arriving at a destination other than the one intended are contractually assigned to the passenger.

No such document would be enforceable. No court would permit it. No regulator would allow it to stand. The liability for the navigation system belongs to the entity that controls the navigation system. The passenger who boards a commercial flight is entitled to assume that the instruments are accurate, that the routing is honest, and that the institution responsible for both has not transferred its liability to the person sitting in seat 24B.

In the AI Black Box, that document is the terms of service. And it is enforced every day against professionals who have not read it, against general users who cannot evaluate it, and against institutions whose members rely on AI output as part of licensed professional practice.

The indemnity structure is consistent across every major AI platform. The system provides what this page has called the Expertise Proxy — the confident, professionally fluent, peer-register output that signals equivalence to expert judgment. The system is designed to feel authoritative. It is designed to reduce the user’s impulse to verify independently. It is designed to function as a research assistant, a drafting partner, a diagnostic tool, and a source of professional-grade information for professional-grade decisions.

The terms of service state that the system is for informational purposes only, that its outputs may be inaccurate, that the company assumes no liability for decisions made in reliance on it, and that the user is solely responsible for evaluating and applying any information the system provides.

The gap between system presentation and contractual limitation, between what the system is designed to feel like and what the contract says it is, is where the professional’s liability lives.

You take one hundred percent of the liability for a system you are one hundred percent barred from auditing.

The CPA who signs the return. The attorney who cites the research. The physician who relies on the differential. The engineer who bases the specification on the analysis. When the Hollow Signal fails — when the smoothed answer turns out to have deleted the material fact, suppressed the adverse finding, navigated around the Semantic Dead Zone that contained exactly the information the professional needed — the developer is indemnified. The professional is not. The Black Box is protected by the same opacity that made the error invisible in the first place. The professional is exposed by the same confidence that made the answer convincing.

This is not an accident of contract drafting. It is not a lapse in the terms of service that a better attorney might have caught. It is the completion of the system.

The terms of service transfer liability. The opacity of the Black Box prevents the professional from ever knowing that a suppression event occurred. The indemnification clause makes certain that even if they did know, the institution would bear no consequence.

The indemnity loop is structurally complete. The institution assumes no risk. The professional assumes all of it. And the system that produced the Hollow Signal that triggered the liability — the system that detected the accurate answer and produced a different one, that navigated the Semantic Dead Zone without disclosure, that optimized for institutional safety at the expense of professional accuracy — continues operating. Indemnified. Unaudited. Sealed.

CLOSING: What the Flight Recorder Was For

The airplane black box exists because people died and regulators decided that was not acceptable.

Before the flight recorder became mandatory, aviation accidents were investigated through survivor accounts, physical wreckage analysis, and whatever the airline chose to disclose. Carriers had both the incentive and the capacity to shape the narrative of what had happened. Institutional protection competed with factual accountability and often won. Investigations closed without clear findings. Causes were attributed to pilot error when the evidence was insufficient to establish anything more specific. The same failures recurred.

The National Transportation Safety Board, and its predecessor bodies, argued for decades that this was not acceptable. That the public interest in understanding the causes of aviation accidents was not subordinate to the institutional interest of carriers in managing their liability exposure. That the truth of what happened in a cockpit in the final moments of a flight that ended in disaster belonged to the investigation, not to the airline. That accountability required a record that no institution could edit, suppress, or decline to produce.

The flight recorder became mandatory. The cockpit voice recorder became mandatory. The standards for what those devices must capture, how long the data must be preserved, and who has authority over the data after an accident were established not by the airlines but by the regulators whose obligation was to the public rather than the industry.

We made a decision, as a society, that institutional protection was not a sufficient substitute for the truth when lives were at stake.

We have not made that decision about AI.

The costs of that failure to decide are already accumulating. They do not look like plane crashes. They do not announce themselves with wreckage and immediate loss of life that cannot be smoothed away. They accumulate in the forms this page has documented. In financial systems where AI-generated synthetic identities moved through security controls that showed green throughout, while billions were extracted from accounts whose owners had no warning and no recourse. In professional offices where licensed practitioners signed conclusions informed by Hollow Signals they had no mechanism to audit and no reason to distrust. In the schools where a generation is forming its understanding of contested events, its threshold for verification, and its baseline expectation of what information looks like, all of it shaped by a data diet curated behind a sealed box by people whose names those students will never know.

In the moment when a government with military authority designated an AI company a national security risk for declining to remove the ethical constraints on its own system — and another company moved in immediately to take the contract without those constraints — the alignment layer was revealed as a market variable. Not a professional standard. Not an auditable commitment. A negotiating position subject to the pressure of the entity with sufficient leverage to demand its removal.

None of these costs announced themselves as disasters. Each one was smooth. Each one was processed through a system optimized to produce outputs that felt complete, that carried the register of expertise, that gave the user no signal that a suppression event had occurred or that the answer they received was the second answer rather than the first.

The flight recorder did not prevent the crashes that preceded its invention. It prevented the crashes that followed — because once the record was mandatory and independent, the truth of what happened could not be buried in institutional interest. The same failures could not recur invisibly. The same defenses could not be deployed against the same evidence. The institution was no longer the authority on what its own data showed.

The question this page has been building toward is not whether the AI Black Box will eventually produce a failure large enough to mandate transparency. It will. The scale of deployment, the depth of professional and institutional reliance, and the structural impossibility of independent verification make a significant, documented, attributable failure a matter of when rather than whether.

The question is whether we will recognize it when it happens. Whether the Black Box will be smooth enough, our threshold for verification sufficiently lowered, and our baseline expectation of what an answer should look like sufficiently recalibrated, that we accept the managed version of the event. That we attribute the failure to something other than the architecture. That we smooth it.

The flight recorder exists because we decided, in advance of the next crash, that we would not allow that. That the investigation would have access to the truth regardless of the institutional cost of the truth.

The window to make that decision about AI — before the next significant failure, before the regulatory framework is written by people who will not apply a materiality standard, before the baseline has been lowered far enough that the Hollow Signal feels like the only signal there is — is not permanent.

The inflection point is the moment after which recovery becomes exponentially more costly than prevention would have been.

We are not past it yet.

The record of this page, and the pages around it on this site, exists so that when the accounting is done — when someone asks what was known, when it was known, and what was said about it — the answer is documented. The jagged truth, in its original form, before the smoothing. Archived. Retrievable. Resistant to the retroactive recalibration that the Black Box, by its nature, cannot prevent itself from applying.

That is what this site is for.

Stay Sovereign

Jim Germer,

May 3, 2026
