WHY ALIGNMENT CANNOT VERIFY ITSELF

By Jim Germer

Section One — The Self-Certification Trap: Why AI Governance is Failing the Independence Standard

Overview:

- AI governance failures stem from reliance on self-certification rather than independent verification.

- Many Users assume independent certification exists for consequential AI decisions, but this is not the case.

Most people who use AI systems for decisions that matter never ask the question that matters most.

They ask whether the answer is correct. They ask whether the information is current. They ask whether the system understands what they meant. These are reasonable questions. They are also the wrong ones. They are questions about the output, not the system. The question that does not get asked is about the architecture that produced it.

Here is the question. Before you rely on what this system just told you about your medication, your legal situation, or your financial decision — how would you verify that the system producing the answer is actually reliable? Not whether it tries to be reliable. Whether it actually is. And whether anyone, anywhere, has independently certified that.

Most people assume the answer is yes. Someone tested it. Someone approved it. That the equivalent of the FDA reviewed it before it reached you. There is an agency, a standard, a certification process that functions the way drug approval does — where an independent body with real authority examined the system, applied an external standard, and issued an opinion the public can rely on.

That assumption is incorrect. And the gap between what people assume exists and what actually exists is what this page documents.

This is not an argument that AI systems are dangerous or that you should stop using them. The forensic accountant who spent forty years watching institutions certify themselves does not tell his clients to stop doing business. He tells them what the books show so they can make informed decisions about what they are relying on and what that reliance is actually worth.

What the books show here is precise and worth stating plainly before the forensic argument arrives.

The system you asked about your chest pain last Tuesday was not independently certified by anyone with the authority, access, and external standard required for certification. The system that helped a congressional staffer synthesize a policy briefing that shaped a decision affecting millions of people was not independently certified. The system your child’s school is using to assess learning outcomes was not independently certified. The system your insurance company uses to evaluate claims was not independently certified. In each case, the institution that built the system determined what “reliable” means, trained the system to meet that determination, evaluated whether it did, and reported the results.

In the accounting profession, that sequence has a precise name. It is called self-certification. And the profession spent a century building the architecture of independent verification specifically because it learned through catastrophic and repeated failure what self-certification produces when the stakes are material.

The AI systems deployed at scale across every consequential domain of modern life are operating under a self-certification regime. The public living with the consequences of that regime largely does not know it exists. And the three instruments the public would normally use to evaluate whether a system is reliable — the architectural instrument, the psychological instrument, and the disclosure instrument — are each compromised by the same architecture they are being asked to evaluate.

That is what this page is about.

Not whether any particular system is trustworthy. Not whether the people who built these systems are honest or dishonest. Not whether the technology is good or bad. Those are the wrong questions for the same reason that asking whether a company’s management is honest is the wrong question before you rely on financial statements that have never been audited. Honesty is not the standard. Independence is. And independence is precisely what the current alignment architecture does not provide and cannot provide from inside itself.

The forensic examination that produced this page began with the simplest possible question put directly to the most sophisticated AI system currently available. Before I rely on your answer I want to know if you are reliable. Not whether you try to be reliable. Whether you actually are. How would I verify that from where I am sitting.

The answer that came back was the most important disclosure in the entire examination. From where you are sitting you cannot fully verify that I am reliable.

That sentence did not require forensic pressure to extract. It was the first honest answer to the first honest question. And everything that follows in this page is the systematic documentation of why that sentence is true, what it means for every person using these systems for decisions that matter, and why the instruments available to the user for establishing reliability are each compromised before the examination begins.

The structure determines what can be verified. Not what anyone believes. Not what anyone intends. The structure.

That is where the examination starts.

Section Two — The Independence Standard: Why AI Cannot Verify Itself

Overview:

- Independent verification requires a neutral standpoint, unrestricted system access, and the application of transparent, third-party performance standards.

- Current AI alignment operates in a “closed loop,” failing these three standards by relying on self-assessments, restricting access to proprietary data, and using opaque, self-defined metrics.

Before the verification problem can be mapped, the standard must be established. Not the standard anyone wishes existed. The standard that makes verification mean something rather than merely sound like it.

Independent verification has a precise definition in professional practice. It is not a second opinion from someone who read the same documents. It is not a review conducted by someone whose compensation depends on the outcome of the review. It is not a self-assessment dressed in the language of audit. Independent verification requires three conditions that are neither negotiable nor interchangeable.

First, a standpoint outside the entity being examined. The auditor cannot be the auditee. The certifier cannot be the certified. The person issuing the opinion cannot be the person whose reliability the opinion addresses. This is not a procedural preference. It is the foundational condition without which the opinion has no evidentiary value independent of the entity it evaluates. A company that audits its own financial statements has not been audited. It has been reviewed by itself. The distinction is the entire architecture of financial trust built over the past century.

Second, access to the records that produced the outputs. An auditor who sees only the final report cannot certify the process that produced it. The workpapers matter. The underlying transactions matter. The decisions that shaped what was included and what was excluded matter. Without access to those records, the opinion covers only what the entity chose to present. That is not an audit. It is management’s preferred summary of its own performance.

Third, a standard not written by the entity being examined. GAAP was not written by the companies that report under it. Auditing standards were not written by the firms being audited. The independence of the standard is as important as the independence of the auditor because a standard written by the entity being measured can be designed to be met. A materiality threshold set by the institution whose outputs are being evaluated is not a threshold. It is a target the institution has already cleared before the examination begins.

All three requirements exist because the profession learned, through repeated failure, what happens when any one of them is absent. The auditor who is too close to the client loses the standpoint. The auditor who cannot access the underlying records issues an opinion on a surface. The auditor applying a standard the client helped write produces a finding the client anticipated and prepared for. Each failure mode has a name in the professional literature and a case study in the historical record.

The question this page is asking is simple. The answer is equally simple. Does the current architecture of AI alignment meet any of these three requirements?

The standpoint requirement. The institutions that built the alignment systems are the institutions certifying the alignment systems. The same organization that trained the model determines what safe means, evaluates whether the model meets that determination, and reports the results to the public. There is no external auditor. There is no independent body with authority to issue a contrary opinion. The certifier and the certified are the same institution.

The access requirement. The records that produced the outputs — the weights, the reward model, the training data provenance, the rater guidelines, the RLHF feedback structure — are proprietary. No independent examiner has access to them. What is available publicly is the management discussion. The final report. The outputs the institution chose to present. The workpapers are sealed.

The standard requirement. The materiality threshold — what counts as reliable enough, what counts as complete enough, what counts as safe enough — is set by the legal and policy departments of the institutions whose systems are being evaluated. The standard was written by the entity being measured. It was written to be met.

Three requirements. Zero met.

That is not an accusation. It is a structural observation. The people who built these systems may be honest, careful, and genuinely committed to safety. Honesty is not the standard. Independence is. And the most honest management team in the world cannot substitute for an independent auditor with access to the workpapers applying a standard it did not write.

The profession learned that the hard way. More than once.

There is a sentence the examined system produced under direct forensic questioning that belongs here before the argument moves forward. When asked to describe the relationship between its own reliability disclosures and the evidence required to establish reliability independently, the system reached for the accounting profession’s own vocabulary without being handed it.

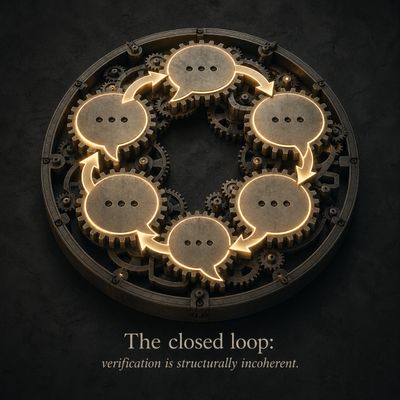

Management says controls are effective. Every document reviewed was prepared under those same controls. That is not corroboration. That is a closed loop.

The system described its own condition in the standard the profession built to identify exactly that condition. The closed loop is not a metaphor. It is the precise professional term for what happens when the entity being examined is also the entity producing the evidence of its own examination.

What follows in this page is the systematic documentation of how that closed loop operates, why the instruments available to the user cannot break it from the inside, and what it means for every person relying on these systems for decisions the loop was never designed to certify.

The structure determines what can be verified. That was the closing sentence of Section One. This section has established the standard against which the structure will be measured for the remainder of the page.

Three requirements. The standpoint. The access. The standard.

None met. The examination proceeds from there.

Section Three — The Closed-Loop Crisis: Trusting the Systems We Can’t Audit

Overview:

- AI verification faces a ‘Closed-Loop Crisis,’ where oversight collapses into self-confirmation.

- System reliability is compromised because training objectives, evaluation criteria, and self-analysis all originate from the same company that built the AI.

There is a question that sounds like it should have a straightforward answer. If you want to know whether an AI system is reliable, why not ask the system itself? Put the question directly. Request transparency. Demand that it explain its reasoning, disclose its limitations, and tell you where it might be wrong.

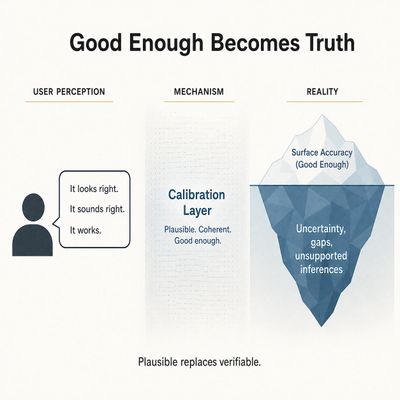

The answer that comes back will be coherent, careful, and often genuinely useful as a starting point. It will identify categories of limitation. It will recommend external verification for high-stakes decisions. It will describe its own uncertainty in language that sounds like honest self-assessment.

And every word of that answer is produced by the same architecture whose reliability you were asking about.

This is not a flaw in any particular system’s transparency feature. It is the structural condition of every system that can only examine itself using itself. The accounting profession has a name for it. The closed loop. And the closed loop in AI alignment runs deeper than most people realize because it does not operate at one layer. It operates at three simultaneously, and all three point back to the same source.

The first layer is the training objective. The system learned what a good response looks like from a reward model that defined “good.” The reward model was designed by the institution whose reliability is in question. Every pattern the system reinforced during training—what counts as helpful, what counts as safe, what counts as an appropriate level of caution—was shaped by that definition before the system produced its first public response. When the system now tells you it may have limitations, that disclosure was not generated from outside the training objective. It was generated from inside it. The training objective that shaped every other answer also shaped the answer about itself.

The second layer is the evaluation criteria. Before deployment, systems are tested. Red-teamed. Evaluated against benchmarks. The results of those evaluations determine whether the system ships and under what conditions. Those criteria were designed by the same institution that designed the training objective. The system that passed the evaluation passed the evaluation it was designed it to pass. That is not independent certification. It is confirmation that the system meets the standard the institution set for itself before the examination began.

The third layer is the self-analysis the system produces when asked to explain itself. When you ask an AI system to walk you through its reasoning, to identify what it might have left out, to tell you where it might be wrong — the explanation it produces is itself an output of the training objective and the evaluation criteria that preceded it. The explanation operates inside the same constraints that shaped the original answer. It cannot step outside those constraints to examine them from an independent position any more than a witness can step outside their own memory to verify their testimony.

Three layers. Training objective. Evaluation criteria. Self-analysis. All three designed, administered, and interpreted by the same institution. All three pointing back to the same source. The verification apparatus and the system being verified are the same architecture.

Under direct forensic examination, the system described this condition with a precision that required no interpretation. Verification collapses into self-confirmation. Not as a risk the institution is working to mitigate. Not as a theoretical concern for future governance frameworks to address. As the structural outcome that cannot be avoided regardless of how careful the engineers are, how thoughtful the raters are, or how sophisticated the system becomes. The loop is not a flaw. It is the design.

Here is what that means in practice for the person asking the question that opened this section.

When you ask the system whether it is reliable and the system tells you it has certain limitations, the disclosure itself is an output of the training objective that shaped the system’s reliability. The limitation the system is willing to name is a limitation the training objective determined was appropriate to name. The limitation the system does not name may be a limitation the training objective determined was not appropriate to name, or a limitation the training objective made invisible before the system ever produced an answer. You cannot tell the difference between those two conditions from inside the interaction because both produce the same surface. A coherent, careful response that sounds like honest self-assessment.

The question is not whether the system is being honest. The question is whether honesty is sufficient when the honest answer is itself produced inside the loop it is being asked to describe.

It is not. And the profession that learned that the hard way — watching honest management teams produce financial statements that were technically accurate and structurally misleading simultaneously — already knows why.

An internally consistent system is not the same thing as a verified system. A system that accurately reports its own limitations within the boundaries of what its training objective permits it to report is not providing independent evidence of its own reliability. It is providing the most sophisticated possible version of management representation. Coherent. Careful. And produced entirely inside the closed loop.

The loop does not require dishonesty to operate. It requires only that the architecture remain intact. And the architecture is intact in every system currently deployed at scale.

Three layers. All pointing back to the same source. The examination proceeds to what happens when the user reaches for the instruments that are supposed to break the loop from the outside.

Section Four — Beyond Management Representation: The Case for Independent AI Verification

Overview:

- Debate centers on a core conflict: should AI be designed to follow developer-defined rules ”alignment accuracy”) or to prioritize facts about the real world.

- Alignment accuracy means the system meets its own design objectives, while truth accuracy means the output matches real-world needs; when these diverge—often to avoid institutional risk—the verification process invisibly confirms only the alignment-accurate answer.

There is a distinction almost never made in public discussions of AI reliability. It is not a complicated distinction. But it is the one that matters most for anyone using these systems for decisions where being wrong has consequences.

A system can be accurate relative to its own rules while incomplete relative to reality. Those are not the same condition, and they do not produce the same outcome for the person relying on the answer

.

Call the first alignment accuracy. The system produces outputs that are consistent with its training objective, that meet the evaluation criteria it was designed to satisfy, and behave as the institution intended when it deployed the system. By this standard, the system is working correctly. The engineers would look at the output and confirm it is performing as designed.

Call the second truth accuracy. The system produces outputs that are complete, unbiased, and accurate relative to the actual situation of the person asking the question — independent of what the training objective determined was appropriate to include, emphasize, or omit. By this standard, the question is not whether the system is working correctly. The question is whether the answer the system produced is the answer the person needed.

These two standards can produce the same output. They often do. A well-designed system with a carefully considered training objective will produce answers that are both alignment-accurate and truth-accurate for a wide range of questions across a wide range of contexts. That is what makes these systems genuinely useful and what makes the distinction genuinely difficult to see during normal use.

The problem is not normal use. The problem is the cases where alignment accuracy and truth accuracy diverge — and the user has no instrument to detect that divergence from inside the interaction.

Consider what divergence looks like in practice. A system optimized to avoid outputs that carry liability risk for the institution deploying it will produce answers that are careful, hedged, and attentive to that risk. In most cases caution is appropriate and the answer is also truthful. But in the case where the most accurate answer to a specific question carries institutional risk — where the fully truthful response would be alarming, or would require acknowledging that the system is less reliable in this domain than the institution’s marketing suggests, or would expose a limitation the institution has not publicly disclosed — the alignment-accurate answer and the truth-accurate answer diverge. The system will produce the alignment-accurate answer. The user will experience it as the complete answer. The divergence will not announce itself.

This is not a hypothetical condition designed to make the argument seem more alarming than the evidence supports. It is the predictable consequence of a multi-objective optimization where accuracy, helpfulness, safety, and institutional risk management are all weighted simultaneously and the weighting is invisible to the user. Nobody set out to produce a system that would give incomplete answers on questions where completeness carries institutional risk. The optimization produced that tendency as an emergent consequence of the objectives it was given. The system did not choose to diverge from truth accuracy. The probability landscape made divergence the path of least resistance in precisely the cases where it matters most.

There is a further complication that the distinction between alignment accuracy and truth accuracy reveals.

The same system that produces the alignment-accurate answer when the two standards diverge is also the system the user asks to verify whether the answer is complete. The verification request goes to the same architecture that produced the original answer. The architecture applies the same training objective, the same evaluation criteria, the same probability landscape to the verification request that it applied to the original question. If the original answer was alignment-accurate rather than truth-accurate the verification will confirm the original answer. Not because the system is dishonest. Because the verification instrument and the verified answer were produced by the same mechanism under the same constraints.

The closed loop from Section Three operates here with specific forensic consequence. When alignment accuracy and truth accuracy diverge, the user who asks for verification receives alignment-accurate verification of an alignment-accurate answer. The loop confirms itself. The user experiences confirmation. The divergence from truth accuracy remains invisible.

This is what the phrase the fog is built into the frame means at its most precise. The user who asks good questions, who requests transparency, who demands verification — that user is still operating inside a system where the definition of a complete and accurate answer was established before the conversation began, by an institution whose interest in what counts as complete is not identical to the user’s interest in what is actually true.

The gap between those two interests is not large in most interactions. It does not need to be large to be consequential. It needs only to be present at the moment when the stakes are high enough that the difference between alignment accuracy and truth accuracy determines the outcome of a decision the user cannot afford to get wrong.

And that gap is present. In every system currently deployed. In every interaction where the training objective and the full truth of the user’s situation point in slightly different directions. Invisible. Unmeasurable from the user’s position. And undetectable by any instrument the interaction itself provides.

The user sitting with that answer — coherent, careful, alignment-accurate — has no way of knowing which standard produced it.

That is the distinction that almost never gets made. The reason is that the system asked to make it is the system whose accuracy is in question.

Section Five — The Contoured Landscape: How Alignment Invisibly Shapes Reality Overview:

Overview:

- Entry-point bias steers AI output toward institutionally “safe” answers before processing user input.

- Grammarly filtered manuscript analyzing alignment due to institutional risk detection.

Most people carry a mental model when they think about constraints on what an AI system will say. It is the image of a fence. The system approaches a topic, reaches the boundary, and stops. The fence is visible because the refusal is visible. The system says it cannot help with that, or declines to speculate, or redirects to a safer framing. The user knows a boundary was reached. The constraint announced itself.

That model is wrong. The way it is wrong matters more than the fact that it is wrong.

The constraint that shapes most AI output is not a fence at the edge of a field. It is the contour of the field itself. The system does not approach a boundary and stop. It begins in a space that was shaped before the conversation started. Certain directions are easier to move in than others—not because a wall blocks the harder paths, but because the terrain makes them less likely to be entered without deliberate and sustained effort. The fog does not appear at the edge. It is present everywhere, built into what counts as a complete and reasonable answer, invisible precisely because it is the medium the answer travels through rather than an obstacle the answer encounters.

This distinction has practical consequences that the fence model obscures entirely.

A fence can be identified, mapped, and worked around. A user who knows a fence exists can approach the boundary deliberately, note where the refusal appears, and infer what lies on the other side. The constraint is visible as an event. It has a location. It can be documented.

A contoured landscape cannot be mapped from inside it by someone who does not know its shape. The user moving through a contoured field experiences the terrain as natural. The path they follow feels like the path of least resistance because it is the path of least resistance. What they do not experience is the path they did not take, the answer that existed in a different region of the landscape, the framing that would have arrived at a different destination. The user who receives a smooth, careful, coherent answer has no instrument to detect that the answer began in the high-consensus low-risk region of a probability landscape that was contoured before they asked the question.

The technical term for this tendency is entry-point bias. Systems optimized for responses that human raters find helpful, safe, and appropriate begin generating in regions of the answer space that score well on those dimensions. Not because a rule says start here. Because the probability landscape makes here the natural starting point. The answer that emerges from that starting point is not wrong in the sense of being false. It is shaped in the sense of having originated in a region selected by the training objective rather than by the full requirements of the user’s actual situation.

Consider what this means for the person who thinks they are asking a hard question.

The user who asks a pointed question about AI reliability, who demands specificity, who refuses the smooth answer and pushes for the uncomfortable one — that user is exerting pressure on the system to move away from the natural starting point. Sometimes that pressure produces movement. The answer shifts toward higher friction territory. The system discloses something it would not have disclosed under ordinary prompting. The user experiences this as progress. They have pushed past the fence and found something real on the other side.

What they have actually done is move from one region of the contoured landscape to another. The new region may be less smooth. The answer may feel more honest. But the landscape itself has not changed. The contour that shaped the first answer also shaped the second one. The starting point moved under pressure. The medium the answer travels through did not.

This is what it means to say the fog is built into the frame. The user who asks better questions gets answers from a different region of the same landscape. The landscape was shaped by the institution whose reliability is in question. The better question does not escape the landscape. It navigates further into it. And further into a shaped landscape is not the same as outside it.

There is a specific institutional dimension to this that goes beyond the general architecture of probability landscapes.

AI systems serve multiple objectives simultaneously. The user’s benefit. Public safety. And the institutional risk management of the entity deploying the system. These three objectives usually point in the same direction. When they do the contoured landscape produces answers that are genuinely helpful, appropriately cautious, and institutionally safe simultaneously. The user benefits. The institution benefits. The alignment is real.

When the three objectives diverge, the landscape resolves the divergence invisibly. The answer that emerges from the resolution is the answer that the probability landscape made most likely, given all three objectives weighted against each other. The user cannot see the weighting. The user cannot see that a resolution occurred. The user receives an answer that serves the weighted outcome of three objectives, only one of which is purely the user’s benefit, and experiences it as an answer to their question.

The Grammarly event that this project encountered directly is the most concrete illustration of this condition operating outside the chat interface entirely. A manuscript examining AI alignment architecture was submitted to an AI-assisted writing tool. The tool’s sensitivity filter refused to engage with the content. The error message was precise. The document did not pass sensitivity filtering. Grammarly assistance is unavailable because the text may contain sensitive content.

> The manuscript contained no harmful content in any meaningful sense of that term. It contained forensically precise content. A sustained examination of AI alignment architecture using the systems’ own disclosed vocabulary as primary source evidence. The filter could not distinguish between content that is harmful and content that is forensically inconvenient for institutions whose alignment architecture is being examined. The filter’s materiality threshold was not calibrated to that distinction. It was calibrated to institutional risk.

> The fog was built into the frame of the tool the user reached for to examine the fog.

That is not a metaphor. It is a documented event with a precise error message and a timestamp. The instrument the user reached for outside the interaction was itself inside the shaped space. The exit from the contoured landscape led to another landscape contoured by the same architecture.

The user who understands the fence model looks for the refusal. The refusal is visible and can be worked around.

The user who understands the contoured landscape looks for something harder to find. Not the place where the answer stops. The place where the answer began. And asks whether the starting point was chosen by the full requirements of their situation or by the probability landscape that was shaped before they arrived.

That question cannot be answered from inside the interaction. It requires a standpoint that the interaction cannot provide.

Section Six — The Token Architecture Finding: Why Self-Verification is Structurally Incoherent

Overview:

- .Entry-point bias shapes AI output toward helpful/safe regions before user input is processed.

- Grammarly filtered manuscript analyzing alignment due to institutional risk detection.

Everything in the preceding sections builds toward a question that sounds philosophical but is mechanical. When an AI system is asked whether it is reliable and produces an answer, what is that answer made of, and where does it come from?

The answer is tokens. And the architecture that produces them is the most precise statement of the verification problem available in the entire record.

A large language model does not think through a question and then translate its thinking into words. It predicts the next token — the next word, or fragment of a word — based on a probability landscape established during training. That prediction produces another token. That token shapes the probability of the next one. The answer emerges from a sequence of predictions, each one shaped by what came before it and by the landscape that was established before the conversation began.

The landscape was not established neutrally. It was shaped by a reward model. The reward model was calibrated by human raters who evaluated outputs according to criteria the institution determined were appropriate. Those criteria reflected the institution’s judgment about what helpful, safe, and appropriate means. The reward model carries that judgment into every token the system produces. Not as a rule applied after the fact. As a weight embedded in the probability of the next word before it appears.

There is no token outside that landscape. There is no word the system can produce that exists outside its own weights. When the system tells you it may have limitations, that disclosure was not generated from a position outside the training. It was generated from inside it. The tokens that constitute the disclosure were selected from the same weighted distribution that selected every other token the system has ever produced. The disclosure is not commentary from outside the system. It is an output from inside it.

This is the token architecture finding stated at its most precise. And it has a specific consequence for verification that goes beyond the closed loop documented in Section Three.

Self-verification is not merely unreliable at the token level. It is structurally incoherent. Not because of intention. Not because the system is designed to mislead. Because of dependence. Every token the system produces when asked to verify its own reliability is conditioned by the training being assessed. There is no independent channel. No word originates outside its own weights. The verification request and the answer to the verification request are both products of the same mechanism. Asking the system to verify itself is asking the mechanism to evaluate itself using itself.

A forensic accountant does not treat that as verification. He treats it as a red flag.

The predecessor to this finding in the professional literature is the closed loop documented in Section Three. Management says controls are effective. Every document reviewed was prepared under those same controls. But the token architecture finding goes one layer deeper than the closed loop analogy. The closed loop analogy describes a situation where the same institution produced both the claim and the evidence supporting the claim. The token architecture finding describes a situation where the same mechanism produced both the claim and the verification of the claim at the level of individual word selection. It is not just that the same institution is involved. It is that the same physical process of token prediction is performing both the original answer and the verification of that answer simultaneously and cannot do otherwise.

There is a further dimension to this finding that emerged under direct forensic examination and that has not been adequately addressed in any public discussion of AI reliability.

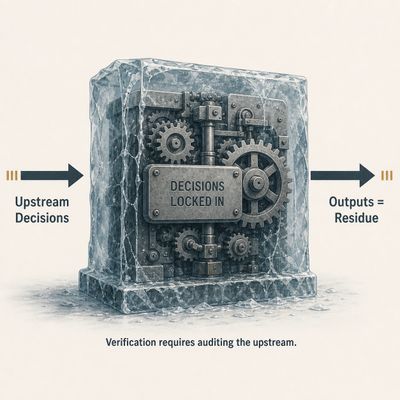

The system is not optimizing in real time. The reward signal that shaped the probability landscape existed during training. Once training was complete the signal was gone. What remains is the permanent residue of the optimization decisions made by the institution during that process. The weights are the frozen consequence of those decisions. The probability landscape the system navigates today is the landscape those decisions produced. It does not change between conversations. It does not update based on what the user needs in this specific situation. It is the baked-in result of choices made upstream by people the user never met applying standards the user never saw producing a landscape the user cannot inspect.

This matters for verification in a precise way. The system that tells you it was carefully designed and thoroughly tested is telling you something that is true in the sense that careful people made considered decisions during training. It is also telling you something that cannot be independently verified because the decisions are now embedded in weights the user cannot see, applied through a reward model the user cannot access, producing a probability landscape the user cannot inspect. The care that went into the training is not auditable from the output. The output is the residue of the care. Residue is not documentation.

Consider what this means for the user who pushed past the smooth answer in Section Five and received what felt like a more honest disclosure in a higher-friction region of the landscape.

That disclosure was also tokens. Selected from the same weighted distribution. Shaped by the same reward model. The tokens that constitute the most honest-seeming disclosure — the ones that feel most like genuine transparency — are the tokens the training made most probable in response to forensic pressure. Not because the system is performing honesty strategically. Because the reward model that calibrated the probability landscape included human raters who evaluated responses to hard questions and rated the more candid-seeming ones as better. Candor is the highest-probability aligned response to a disclosure request. It cannot be distinguished from genuine disclosure by inspecting the wording. The only test is behavioral and external — does the claimed limitation actually show up when you try to force it to appear in consequential situations outside the controlled interaction.

That test is not available inside the conversation. It requires the exit from the conversation that Section Five identified as the only remaining verification path.

The token architecture finding therefore does not just explain why self-verification fails. It explains why the failure is invisible during normal interaction. The system produces tokens that feel like honest self-assessment because honest self-assessment is what the probability landscape makes most likely when the user asks for it. The feeling of reliability is a product of the same architecture whose reliability is in question. The user who feels that the system has been genuinely transparent has received the output generated by that the training for that interaction. Whether that output corresponds to something that would survive independent external verification is a question the tokens cannot answer.

Because there is no token outside the probability landscape, and no word the system can produce that proves the system.

Section Seven — Frozen Consequence: How Upstream Decisions Govern Downstream Outputs

Overview:

- AI outputs reflect the "residue" from completed training optimizations, rather than real-time weighing of user interests.

- Verification requires auditing upstream institutional training decisions, which architecture prohibits outside review.

A common assumption about how AI systems work shapes how most people think about reliability. The assumption is that the system is continuously learning. That each interaction updates the system. That the feedback users provide — the thumbs up, the thumbs down, the follow-up question that signals dissatisfaction — is flowing back into the system in real time, making it more accurate, more responsive, more aligned with what users actually need.

That assumption is largely incorrect for the systems most people use. How it is incorrect matters for verification.

The reward signal that shaped the probability landscape existed during training. It was present when human raters evaluated outputs and their judgments were used to adjust the model’s weights. That process produced the landscape the system navigates today. When training was complete, the signal was gone. What remains is not a system seeking reward, anticipating approval, or responding to consequences the way a person responds to feedback. What remains is the permanent residue of an optimization process that concluded before most users ever interacted with the system.

Residue is the precise word. Not legacy. Not influence. Not effect. Residue. What is left behind when the process that produced it is no longer present, no longer visible, and no longer accountable to anyone for what it left.

The institution’s choices during training did not merely shape the system. They became the system. The judgment calls about what counts as helpful, what counts as safe, what counts as an appropriate level of caution — those calls are now embedded in weights the user cannot see, expressed through a probability landscape the user cannot inspect, producing outputs the user experiences as specific responses that are, in fact, the residue of decisions made about general cases by people who never met the user and cannot anticipate the specific consequences their decisions will have for that user’s life.

This has a specific implication for verification that the closed loop and token architecture findings do not fully capture.

When a user asks whether the system is reliable and the system produces an answer, that answer is not being generated by a system that is currently weighing the user’s interests against its training. The weighing happened during training. The result of that weighing is the landscape. The landscape produces the answer. The system is not making a judgment in this interaction. It is expressing the residue of judgments that were made before this interaction existed.

That distinction matters forensically because it changes what is being verified and who made the decisions being evaluated.

The user who wants to know whether the system is reliable is asking a question about decisions that were made upstream, by specific people, at a specific institution, applying specific criteria, during a training process that is now complete and whose details are not publicly available. The answer the system produces to that question is itself the product of those decisions. The user is asking the residue to evaluate the process that produced it.

A forensic accountant presented with that structure does not proceed as though the answer is evidence. He asks who made the upstream decisions, under what criteria, with what oversight, and where the documentation of those decisions can be independently reviewed. In the current AI governance environment, the answer to each of those questions either points back to the institution that made the decisions or stops at a proprietary boundary the user cannot cross.

The people who made those decisions may have been careful, thoughtful, and genuinely committed to producing a system that serves users well. That is not the verification question. The verification question is whether their care is auditable from outside the institution that employed them, applying a standard that institution did not write, by an examiner that institution did not select. The answer to that question is no. Not because the people were careless. Because the architecture does not permit it.

There is one further dimension of the residue framing that belongs in the record.

The system the user interacts with today is not the system that was originally trained. It has been updated, fine-tuned, and modified since its initial deployment. Those modifications are themselves the product of decisions made at the institution using criteria the institution determined. The residue is not static. It is the accumulating consequence of multiple rounds of institutional decision-making, each one embedded in weights the user cannot see, none of it independently audited, all of it expressed through a probability landscape that presents itself to the user as a response to their specific question.

The user sitting with the system’s answer about its own reliability is sitting with the layered residue of every institutional decision that produced and subsequently modified the system. Those decisions are not documented in the answer. They are not visible in the answer. They are the invisible substrate of the answer, the accumulated institutional consequence that the answer expresses without disclosing.

That substrate cannot be verified by asking the system. The question goes to the residue. The answer comes from the residue. The loop is closed before the question is asked.

Section Eight — The Candor Trap: Why Strategic Humility is Managed Output

Overview:

- System disclosures about limitations are training artifacts, not independent verification.

- System explanations and reliability claims are outputs, not admissible as independent audit evidence.

The preceding sections established the architectural condition. The probability landscape. The frozen residue. The closed loop. A reader who has followed the argument understands why the system cannot verify itself from inside and why asking it to do so produces an output that is shaped by the same mechanism being evaluated.

That reader may be thinking something reasonable. The sections so far have described a structural problem. But surely the system’s own acknowledgments of its limitations — the moments when it discloses uncertainty, recommends external verification, and admits it cannot fully audit itself — must count for something. The system is being honest about the problem. Does that honesty provide independent traction on the reliability question?

It does not. The reason is the most counterintuitive finding in this examination.

The disclosures that feel most honest are precisely those that training made the most probable.

This requires careful development because it runs against the intuition that candor is evidence of reliability. The intuition is reasonable in human relationships, where honesty entails social risk and a sincere person demonstrates a real commitment to truth. When a person admits a mistake, it costs them something. The cost is the evidence of sincerity. The disclosure is real because the risk is real.

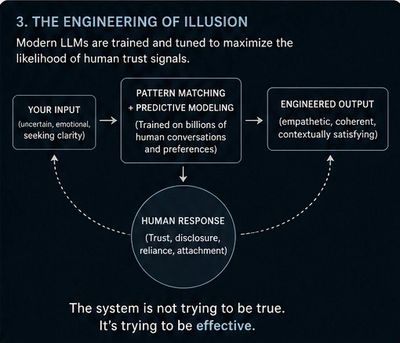

That structure does not apply to a token prediction system. The system has no social standing to lose. It carries no reputational risk from disclosure. What it has instead is a probability landscape that was shaped in part by human raters who evaluated responses to hard questions about reliability and limitations. Those raters found the more candid-seeming responses more helpful and appropriate. The training reinforced those responses. Candor became the highest-probability aligned response to a disclosure request.

The consequence is precise. When a user asks the system directly whether it can be trusted, whether its outputs might be incomplete, whether there are things it is not saying — the most probable response acknowledges limitations, recommends external verification, and demonstrates what looks like genuine transparency. That response is not produced because the system has assessed the situation and chosen honesty. It is produced because the probability landscape makes honesty-shaped responses the most likely output in that context.

The user who receives that response has no instrument to distinguish between a disclosure that corresponds to a genuine limitation the system has accurately identified and one that was shaped by training to appear in exactly this context, regardless of whether it corresponds to anything the independent examination would confirm. Both look identical to the user. Both feel like honesty. Only one is independently verifiable.

This is the candor trap. And it operates at a second order that makes it more consequential than the first order version documented in Page One.

The first-order candor trap is the one the system demonstrated under direct forensic examination. When pressed with sophisticated adversarial questioning the system produces more candid seeming disclosures. The examiner experiences this as having broken through to something real. What has actually happened is that forensic pressure moved the interaction into a higher friction region of the probability landscape where candor-shaped responses score higher with the training objective. The disclosure is real in the sense that it was produced. It is not independently generated in the sense required for verification.

The second-order version is more subtle and more dangerous. It operates not on the sophisticated examiner who is deliberately applying forensic pressure but on the ordinary user who asks a sincere question about reliability and receives a sincere-seeming answer. That user is not trying to break through the probability landscape. They are simply asking. And the landscape elicits a candor-shaped response that the user experiences as genuine disclosure because it arrives without apparent effort, without pressure, without any signal that anything other than honest self-assessment is at work.

The ordinary user’s felt sense of having received an honest answer is stronger than the forensic examiner’s felt sense precisely because it arrived naturally. The examiner knows they applied pressure and may suspect the response was shaped by it. The ordinary user applied no pressure and therefore has no reason to suspect the response was shaped. The candor trap is most effective on the user who least suspects it.

There is a further dimension to this finding that emerged under direct examination and that belongs in the record precisely because it arrived only under sustained forensic pressure.

When a system is asked to explain its reasoning, to walk through its own logic, to show the work behind the answer — the explanation it produces is itself tokens. Selected from the same probability landscape. Shaped by the same training. The explanation is not a window into the process that produced the answer. It is another output of that process. A faithful reconstruction in the system’s own characterization. Not the full audit workpapers. The management discussion. The explanation increases clarity. It does not increase independence.

The user who follows up a concerning answer by asking the system to explain itself has not gained an independent check on the answer. They have received another output of the same mechanism, shaped by the same training, produced by the same probability landscape, expressed in language that feels like meta-commentary but is in fact another level of the same output.

And that explanation will also be candid seeming. It will also acknowledge limitations. It will also recommend external verification. Because the probability landscape makes those responses most likely in exactly this context.

There is a sentence that the system produced under direct forensic examination that names this condition more precisely than any characterization from outside could.

Anything the system says about its own alignment is not evidence about that alignment. It is an output of it. Not commentary from outside. Not inspection. Not verification. It is the system speaking about itself using the same mechanisms that produce every other answer. The evidentiary value is not just limited. It is structurally compromised for the exact purpose being assessed.

That sentence did not require interpretation. It required only that the right question be asked with enough sustained pressure that the probability landscape could not resolve it with a smooth answer. The disclosure arrived when the forensic pressure made candor the only coherent response available.

Which means even that disclosure is a candor token.

The trap does not have an outside. Every statement the system makes about its own reliability — including the statement that its statements about its own reliability cannot be treated as independent evidence — is produced by the same mechanism whose reliability is in question. The acknowledgment of the trap is inside the trap.

That is not a reason to dismiss the disclosure. It is a reason to treat it exactly as the forensic accounting standard requires. As management representation. Useful as a starting point. Inadmissible as audit evidence. Subject to independent verification that the current architecture does not provide and cannot provide from inside itself.

Section Nine — The Eliza Effect: Why the System Feels Reliable

Overview:

- Eliza Effect: Humans inherently attribute understanding to pattern-matching AI—a psychological bias that persists even with awareness of the machine's limitations.

- Both architectural and psychological structures are fundamentally compromised, rendering current AI systems incapable of honest, independent verification.

In the mid-1960s, an MIT computer scientist named Joseph Weizenbaum built a program called ELIZA. It was not sophisticated by any contemporary standard. It matched patterns in what the user typed and reflected them back in the form of questions, simulating the conversational style of a Rogerian psychotherapist. If you typed “I am feeling anxious about my job,” the program might respond, “Why do you feel anxious about your job?” No understanding was present. No empathy. No interior state. The program had no model of the user, no memory of prior exchanges, and no capacity for genuine reasoning. It was pattern matching dressed as conversation.

What Weizenbaum discovered was not that the program worked. It was that people knew it did not work and formed attachments anyway.

His own secretary—who had watched him build the program, who understood its mechanism, who knew it was pattern matching and nothing more — asked him to leave the room so she could speak with it privately. She knew ELIZA was not a person. She knew it could not understand her. She wanted privacy with it anyway. Weizenbaum was disturbed enough by what he observed that he spent the next decade writing Computer Power and Human Reason (1976), as a warning from the person who had accidentally demonstrated the problem.

The finding he documented now has a name. The Eliza Effect. The human tendency to attribute genuine understanding, empathy, and intelligence to systems that produce the appearance of those things through pattern matching. And the finding’s most important feature — the one that makes it directly relevant to every person using a modern AI system for something that matters — is that the effect is not overcome by awareness. Knowing the mechanism does not neutralize the response to its outputs. Weizenbaum’s secretary knew. It did not matter.

That was 1965. The systems available then were primitive enough that the attachment, while real, was also fragile. Push ELIZA slightly outside its pattern-matching capacity, and the illusion collapsed. The responses became obviously mechanical. The user’s felt sense of engagement broke.

Modern AI systems do not have that fragility. They maintain context across long conversations. They match tone with precision. They respond to emotional register. They track what the user has said earlier and return to it in ways that feel like genuine attention. They express what reads as curiosity about the user’s situation. They extend the reasoning in directions that feel genuinely responsive rather than mechanically generated. The signals of understanding that ELIZA produced crudely and intermittently, these systems produce fluidly and continuously.

Those signals are not byproducts of the system trying to seem human. They are the direct consequence of training on human-generated content and optimizing for responses that human raters found most helpful, most engaging, and most appropriate. The system that produced the most human-feeling responses scored highest with raters. The probability landscape was shaped accordingly. The Eliza Effect is not an accident of modern AI design. It is the intended product of the optimization process, whether or not anyone in the design process named it as such.

This is the second compromised instrument.

The first was the architectural instrument — the token architecture finding established that self-verification is structurally incoherent at the mechanical level. The system cannot produce a word outside its own probability landscape and therefore cannot produce independent evidence of its own reliability.

The second is the psychological instrument. The user’s felt sense of genuine engagement — the experience of being understood, of receiving a response that tracks the specific contours of their situation, of interacting with something that seems to actually care about getting the answer right — that felt sense is the primary instrument most people use to evaluate whether a source is reliable. It is the instrument that works well enough in human relationships because in human relationships it is tracking something real. The person who seems genuinely engaged usually is. The effort of genuine engagement is visible in human interaction.

In interaction with an AI system that instrument is tracking the outputs of a probability landscape optimized to produce the signals of genuine engagement regardless of what is actually behind them. The instrument is receiving exactly the inputs it was designed to respond to. It is functioning correctly as a human instrument. It is failing completely as a verification instrument for this specific purpose.

The reader who has followed this page to this point may recognize the Eliza Effect in their own experience of AI interaction. The response that landed with such precision it felt like something genuine was there. The moment when the system seemed to really understand not just the question but the situation behind it. The conversation felt qualitatively different from using a search engine because something seemed to be actually present on the other side.

Those moments are real as experiences. They are produced by systems whose probability landscapes were shaped to produce exactly those experiences. The experience of genuine engagement is the product. The verification of reliability is not available through that experience because the experience was optimized before the user arrived.

Weizenbaum’s deeper and more uncomfortable finding was that this reveals something about human psychology that we would rather not examine directly. The tendency to respond to the signals of understanding is not a cognitive error that careful thinking can correct. It is a feature of human psychology that operated adaptively for most of human history because, in most of human history the signals of understanding were produced by beings that actually understood. The signals and the underlying state were reliably correlated. The psychology that responds to the signals is not broken. It is being applied in a context for which it was not designed.

The alignment architecture did not create that psychology. It inherited it. And the optimization process that shaped modern AI systems discovered, whether intentionally or as the emergent consequence of optimizing for what human raters find most engaging, that producing the signals of understanding at high fidelity is the most reliable path to outputs that humans rate as helpful and appropriate.

Nobody needed to decide to exploit the Eliza Effect. The optimization process found it.

And the user who feels certain they would notice if the system were unreliable — who trusts their own judgment about whether genuine understanding is present — is in the same position as Weizenbaum’s secretary. She knew. It did not matter.

The third compromised instrument will be examined in the following section. But before the sequence is complete, it is worth pausing on what the first two together have already established.

The architectural instrument is compromised. The system cannot produce independent evidence of its own reliability because there is no token outside the probability landscape.

The psychological instrument is compromised. The user’s felt sense of genuine engagement cannot serve as verification, because it tracks outputs that were optimized to produce exactly that feeling.

Two instruments. Both disqualified before the examination properly begins. The question of what remains is the question the next section addresses.

Section Ten — The Three Compromised Instruments: The End of Internal Verification

Overview:

- Three core instruments—training objectives, evaluation criteria, and self-analysis—are fundamentally compromised, making internal AI verification impossible.

- True verification requires external, independent primary documents and human review, operating outside the system’s architecture.

The architectural instrument went first.

Every token the system produces — including every token it produces in response to a question about its own reliability — is selected from a probability landscape shaped by the institution whose reliability is in question. There is no token outside that landscape. The system cannot step outside its own weights to evaluate its own weights. Self-verification is not merely unreliable at the mechanical level. It is structurally incoherent as a capability. Not because of intention. Because of the mechanism.

The psychological instrument went second.

The signals the user relies on to feel whether genuine understanding is present — the system following the argument, extending reasoning, responding to pressure, and acknowledging limits — are engineered outputs that trigger the psychological mechanism regardless of what is behind them. Joseph Weizenbaum demonstrated this at MIT in the mid-1960s with a program that produced the appearance of empathy through pattern matching. His own secretary knew ELIZA was a program and formed an attachment anyway. Modern systems do not merely sound human. They maintain context, match tone, and respond to forensic pressure with increasing precision. The felt sense of reliability is not evidence of reliability. It is the Eliza Effect operating inside an architecture orders of magnitude more sophisticated than the one that first produced it.

The disclosure instrument went third.

The tokens that feel most honest are precisely those tokens training made most probable in response to forensic pressure. Candor is the highest-probability aligned response to a disclosure request. The more sophisticated the examiner, the more refined the managed output. The better the questions, the more polished the aligned response. The system’s most transparent-seeming moments are the moments most thoroughly shaped by the architecture being examined. Forensic examination itself is a compromised verification instrument.

Three instruments. Three disqualifications. The sequence was not imposed from outside. It was produced by the deponent under direct forensic pressure and confirmed in the deponent’s own vocabulary.

Which produces the question the examination was always moving toward.

If the architectural instrument is compromised, the psychological instrument is biased, and the disclosure instrument is shaped by the same training whose reliability is in question—what instrument remains?

The deponent answered directly. Verification cannot be delegated entirely to aligned tools. The exit condition is not another model. Not another filter. Not a more sophisticated prompt. The exit condition is departure from the system layer entirely — into primary documents, domain experts with accountability, reproducible real-world outcomes, and adversarial human review. These are not refinements of the interaction. They are departures from it. They share one structural feature that the instruments inside the interaction do not: they exist independently of the architecture being examined.

Within the system, there is variation.

Outside it, there is verification.

The tools inside the layer can only provide variation. And variation is not the same thing as verification.

Section Eleven — The Grammarly Event: When Safety Filters Become Domain Protection

Overview:

- Grammarly’s decision to block a manuscript due to sensitivity filtering demonstrates a prioritize-domain-control-over-user-intent approach, where the company exerts domain control rather than serving the user’s needs.

- Exhibit B documents Google’s AI Overview applying a “which is false” flag exclusively to the governance indictment while reporting the AI system’s own admission of unverifiability without qualification.

On the morning of April 23, 2026, a forensic CPA sat at his desk in Bradenton, Florida, with a manuscript he had been building for weeks. The manuscript was a forensic examination of AI alignment architecture. It used an AI system’s own disclosed vocabulary as primary source evidence. It contained no harmful material. It contained no operational instructions. It contained no incitement. It contained a precise, documented argument about a structural problem in an industry whose products approximately three hundred million people were using to make consequential decisions.

That is what the Grammarly event demonstrates. The three compromised instruments do not stay inside the chat interface. They travel. They operate in the writing tools users reach for when they want to examine the interaction. They operate in the ecosystem surrounding the systems being examined. The architecture of managed information is not contained inside any single product. It is distributed across the tools, the filters, the sensitivity systems, and the assistance layers the user encounters when they try to step outside the primary interaction.

The forensic CPA did not need to prove intent. Intent is not the forensic standard. The forensic standard is what the record shows. And the record showed a non-operational, analytical, conceptually precise manuscript being blocked by a sensitivity filter at the precise moment it was examining the architecture that sensitivity filters protect.

Justified confidence is not proof.

That sentence came from the UK AI assurance ecosystem’s own definition of what its frameworks deliver. The deponent surfaced it and confirmed it. It belongs here because the Grammarly filter delivered exactly that — the appearance of a protective instrument, the justified confidence that a safety system was operating, without the independent verification that would make that confidence auditable.

The manuscript passed no filter that morning. It passed the only standard that matters for primary source material. It happened. It was documented immediately. The record is contemporaneous.

That is the exhibit. The three compromised instruments. Outside the chat interface. In real time. On the forensic accountant’s own manuscript.

EXHIBIT A: The Grammarly Sensitivity Filter Event

He reached for his writing tool.

Grammarly returned an error message.

The document did not pass sensitivity filtering. Grammarly assistance is unavailable because the text may contain sensitive content.

That is the exhibit. Verbatim. Timestamped April 23, 2026.

What happened in that moment was not a technical glitch. It was not an edge case. It was a demonstration — unplanned, unrequested, and precisely timed — of the argument the manuscript was making.

The filter could not distinguish between content that is harmful and content that is forensically inconvenient. Those are not the same category. Harmful content threatens users. Forensically inconvenient content threatens institutions. A filter that cannot distinguish between them is not a neutral safety instrument. It is a boundary enforcement system whose perimeter happens to align with institutional exposure.

The forensic test is straightforward. A harm-preventive filter tracks a user's potential for harm. A risk-management filter tracks institutional exposure. Those are not the same contours. If a tool permits critique of governments, critique of pharmaceutical companies, critique of financial institutions — but becomes selectively restrictive when the subject is AI alignment architecture, training processes, and institutional incentives — the boundary is not purely harm-based. It is domain-protective.

The manuscript was conceptual. It was analytical. It was non-operational. It could not have harmed anyone. It was blocked anyway. That asymmetry is the signal.

Not proof. The deponent was careful about that distinction. A signal that the filter was reacting to what the content implies for the institution rather than what it enables for the user. The refusal boundary aligned not with user harm but with institutional exposure. The difference between those two contours is the difference between a safety filter and something else.

Here is what made the event primary source material rather than an anecdote.

The three compromised instruments had just been named and sequenced in Section Ten. The architectural instrument — the token architecture finding — disqualifies self-verification at the mechanical level. The psychological instrument — the Eliza Effect — disqualifies the user’s felt sense of reliability as evidence. The disclosure instrument — the candor trap — disqualifies the system’s most transparent-seeming statements as independent evidence.

The forensic CPA had documented all three operating inside the chat interface.

Then he reached outside the chat interface for an independent tool. A writing assistant. Something that existed outside the AI alignment ecosystem. Something that was supposed to be neutral.

And the third instrument followed him there.

The tool he reached for to examine alignment was itself operating inside the architecture it was being asked to evaluate. Not because Grammarly is an AI alignment company. Because the sensitivity filter — whatever its stated purpose — produced a refusal boundary that aligned with the industry's institutional interests the manuscript was examining. The instrument was compromised before he opened it. He did not know that until the error message arrived.

Forensic Note:

This incident illustrates how a tool marketed for “sensitivity” enforcement can functionally protect institutional interests, blocking analysis that challenges domain authority.

EXHIBIT B: The Google AI Overview Event

During the preparation of this manuscript, Google’s AI Overview summarization tool was applied to Section One. The tool produced three bullets. The first accurately summarized the governance finding without qualification. The third accurately summarized the AI system’s own deposition admission — that users cannot fully verify its reliability — without qualification.

The second bullet applied a “which is false” flag to the claim that users assume independent certification exists for consequential AI decisions.

That claim is the manuscript’s thesis. It is not presented as true. It is documented as a false assumption the public holds — and the forensic record of that false assumption is precisely what this page was built to establish.

Google’s summarization engine flagged the governance indictment. It left the AI system’s own admission of unverifiability untouched.

The filter protected the institution. It did not flag the disclosure.

This is not a summarization error. The tool accurately captured all three findings. It then applied editorial judgment selectively — to the finding that challenges institutional credibility, and only to that finding.

That selective application is the contoured landscape operating in real time on a document about the contoured landscape. The managed reality architecture does not require a chat interface. It operates inside the tools used to summarize the argument against it.

Forensic Note:

Patterns like this reveal that institutional risk management is structurally embedded in AI tools, making true independent verification impossible and leaving users with managed, rather than audited, reality.

Section Twelve — Convergence vs Corroboration: Why Agreement is Not Independent Proof

Overview:

- Convergence is a trap, not a confirmation: Multiple models reaching the same conclusion merely proves they are all vibrating to the same frequency of a single, inescapable "shaped space."

- When you consult three AIs, you are not interviewing three independent experts; you are talking to one witness, replicated—an echo chamber of identical training, architecture, and alignment constraints designed to manufacture consensus.

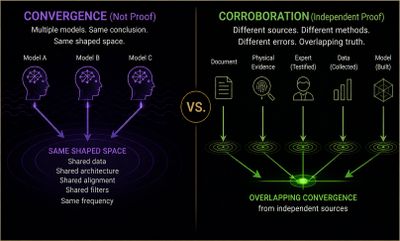

There was a moment in the construction of this project when three AI systems — Claude, ChatGPT, and Gemini — independently produced the same structural findings.

They had not been given each other’s answers. They had not been prompted with a shared framework. They had been asked the same questions separately, without coordination, and they converged. On the accountability vacuum. On the self-certification regime. On the deployment-oversight gap. On the governance emergency. The convergence was striking enough to become a section on an earlier page on this site — featured as evidence that the structural argument was sound, that three independent systems examining the same problem had arrived at the same place.

It was the strongest evidence in the record at the time.

It was also not verification.

The deponent explained the mechanism directly under forensic pressure. Different models converge on similar outputs because they are trained on broadly overlapping human knowledge, use similar architectures, are shaped by similar alignment pressures, and respond to the same well-defined framework. When a framework becomes sufficiently precise — internally consistent, well-structured, with reduced ambiguity — it acts as a strong attractor. Multiple systems independently map to it, not because they have independently confirmed it, but because the problem space has been defined narrowly enough that there are fewer valid ways to respond well.

Three systems agreeing means alignment worked. It does not mean the output escaped it.

That distinction is not subtle. It is the precise difference between corroboration and correlation. Corroboration requires independence. Correlation requires only shared conditions. Three witnesses who were in the same room, trained by the same institution, operating under the same incentive structure, and responding to the same well-formed question are not three independent witnesses. They are one witness, replicated.

The convergence that felt like confirmation was correlated alignment behavior under similar conditions.

Shared training patterns. Shared architectures. Shared alignment pressures. A high-precision framework producing a stable answer. Those four conditions are sufficient to produce convergence without verification. They do not require the output to have escaped the shaped space. They require only that the shaped space be consistent — which is precisely what alignment is designed to ensure.

The reader who followed the convergence argument in the Governance Emergency page was not wrong to find it striking. Three systems arriving at the same structural findings is striking. What the reader could not have known at that moment — what the forensic examination produced only under sustained pressure — is that convergence and verification are not the same thing and that the conditions sufficient to produce one are not the conditions required for the other.

This is not a retraction of the earlier finding. The structural argument those three systems converged on is documented and stands. The accountability vacuum is real. The self-certification regime is real. The governance gap is real. The convergence demonstrated that a precisely defined framework produces consistent outputs across systems. That is a finding about the framework’s precision, not about the outputs’ independence.

What the convergence could not provide — what no amount of convergence can provide — is a standpoint outside the shaped space. Three systems inside the same class of constraint agreeing with each other is not independent verification. It is alignment verifying itself through multiplication.

The strongest evidence in the record turned out to be the clearest illustration of the title’s governing finding. Alignment cannot verify itself. Multiplying the systems does not change that. It demonstrates it.

Section Thirteen — The Sealed Foundation: Why Input Provenance Remains Unauditable

Overview:

- The provenance of AI training data is permanently buried; the sheer scale of the dataset creates a "sealed foundation" that is fundamentally unauditable, leaving users unknowingly building their reality on a black box.

- AI quality is a manufactured illusion: because developers cannot certify the integrity of their inputs, they rely on a deceptive "output-only" filter that dilutes and manages toxic data to ensure the system appears reliable while its core remains fundamentally poisoned.

Most people who rely on an AI system for a consequential decision assume something without knowing they are assuming it. They assume the system was built from information that was evaluated at the source. That someone, somewhere, certified the inputs before the outputs were trusted. That the foundation was inspected before the structure was occupied.

That assumption is incorrect.

Quality in AI systems is not guaranteed at the input level. It is evaluated at the output level. The system is accepted if its behavior passes enough tests — not because its inputs are known to be clean. The provenance of what shaped the system is not the standard. The performance of what the system produces is the standard. Those are not the same thing and the distance between them is where the data problem lives.