Who Governs AI? The People Who Profit From It

By Jim Germer

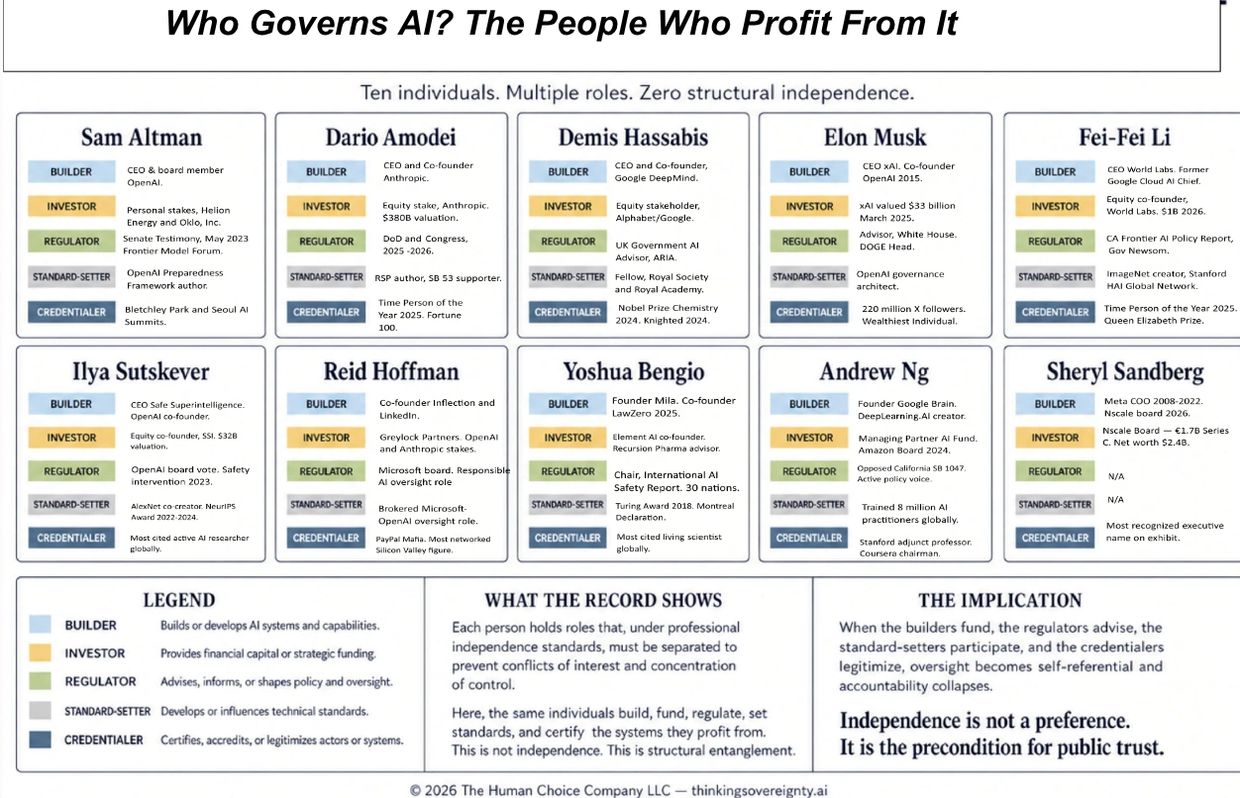

The people who govern AI are the people who profit from it. That is not a conspiracy. It is a documented institutional configuration — and it is shaping decisions that will affect every person who works, learns, borrows, applies for insurance, seeks medical care, or interacts with any institution that has adopted AI as part of its operations. That is not a future scenario. It is happening now. The same ten individuals who build the most powerful AI systems in history simultaneously fund them, regulate them, write the safety frameworks that govern them, and appear at the summits that certify them as responsible. No independent body reviews their decisions. No external audit architecture equivalent to those used in finance, medicine, or aviation currently exists. No enforceable professional independence standard currently governs these overlapping institutional roles.

The little guy — the patient, the borrower, the job applicant, the student, the citizen — has no seat at this table. He never did. The exhibit is the table. These are the people sitting at it. Every role shown is publicly documented. The overlap itself is the finding. What you are looking at is not a failure of governance. It is the replacement of governance with something that has no name yet — but that a forensic accountant would recognize immediately as self-certification. In every other consequential industry, independence standards emerged precisely because self-certification repeatedly failed the public.

WHAT THE RECORD SHOWS

The profiles below document a structural concentration of overlapping roles that independence standards in other regulated industries were specifically designed to prevent.

Sam Altman

Sam Altman is the most completely documented independence violation on the exhibit. As CEO and board member of OpenAI, he directs the development of the most widely deployed AI systems on earth. He simultaneously holds personal financial stakes in Helion Energy and Oklo Inc. — AI-adjacent companies whose commercial success depends on the same energy infrastructure that powers the systems he builds. He testified before the United States Senate Judiciary Committee in May 2023 about the oversight of AI systems he profits from. He co-founded the Frontier Model Forum — the primary industry self-governance body — while leading the industry’s largest company. In April 2025 he resigned as chairman of Oklo Inc., citing a conflict of interest with his OpenAI role.

That resignation is the most important single fact in his record. He identified his own conflict, named it publicly, and resolved half of it. The other half — the Senate testimony, the Frontier Model Forum, the standard-setting, the credentialing — remains intact. He did not resolve the configuration. He pruned one branch of it.

Dario Amodei

Dario Amodei co-founded Anthropic and holds an equity stake in a company valued at $380 billion as of February 2026. He participates in Department of Defense contracting, congressional policy conversations, and California AI legislation simultaneously. He authored Anthropic’s Responsible Scaling Policy — an internally administered safety framework that influenced both OpenAI and Google DeepMind to adopt similar structures within months of its publication. He supported California SB 53, the first state-level AI transparency law, while leading the company that SB 53 regulates. In February 2026, he refused a Pentagon request to remove contractual prohibitions on Claude’s use for autonomous weapons targeting and domestic mass surveillance.

That refusal is a private individual exercising a governance veto over a federal weapons request — with no public mandate, no congressional authorization, and no independent oversight of the decision. He has said publicly that these decisions are too big for any one person. He is the one person making them.

Demis Hassabis

Demis Hassabis co-founded DeepMind in 2010, sold it to Google in 2014, and now leads Google DeepMind as CEO, directing the development of the Gemini model series and some of the most consequential AI research produced anywhere on earth. His 2024 Nobel Prize in Chemistry for AlphaFold protein structure prediction made him the most decorated active AI builder, elevating his institutional legitimacy and authority to a level no other figure on this exhibit has achieved. He advises the UK Government Office for Artificial Intelligence and participated in both the Bletchley Park and Seoul AI Safety Summits.

Sebastian Mallaby’s biography documents something the public record alone does not fully capture. When DeepMind was sold to Google, Hassabis and his co-founder Mustafa Suleyman extracted a promise that the technology would never be used for weapons or surveillance. By 2025, Google was actively pursuing national security AI contracts. With their faith in governance mechanisms shattered, both men concluded that their best remaining tool was their own influence within the institutions they led. The governance mechanism failed. Personal judgment filled the vacuum. That substitution is the structural argument of the entire exhibit stated in one biographical fact.

Elon Musk

Elon Musk is the most financially concentrated entry on the exhibit and the most structurally complex. He co-founded OpenAI in 2015 as a nonprofit safety organization. He departed the board in 2018. He founded xAI in 2023 as a direct competitor in the frontier AI market. He is currently suing OpenAI in active federal litigation, alleging it abandoned its nonprofit safety mission — while building a for-profit system with no equivalent independent safety oversight. He signed a public letter in March 2023 calling for a six-month pause in AI development. He was secretly building xAI at the same time. He became Senior Advisor to the President and de facto head of the Department of Government Efficiency in January 2025. DOGE subsequently dismantled the Consumer Financial Protection Bureau — the regulatory body that was preparing to regulate X Money, his financial platform on X. X Money then launched. A Senate Banking Committee letter dated April 2026 formally asked whether DOGE employees accessed confidential competitor data during the dismantling. That question has not been publicly answered.

The United States Department of Justice intervened on behalf of xAI in a Colorado state regulation case in April 2026: the federal government defending a private AI company in state regulatory proceedings while that company’s founder holds a Senior Advisor role in the same federal government. This is not an ordinary conflict of interest. It is a conflict of interest at a scale that has never existed before in any regulated industry. The man who says AI needs more oversight led the office that dismantled the agency preparing to regulate his own financial platform. If that was coincidence, what would it look like if it wasn’t?

Fei-Fei Li

Fei-Fei Li created ImageNet in 2009, a database of fifteen million labeled photographs that became the training foundation for virtually every modern computer vision system. Without ImageNet there is no facial recognition, no self-driving car vision system, no medical imaging AI. She did not merely participate in the AI revolution. She provided one of its foundational building blocks. She is now CEO and co-founder of World Labs, a spatial intelligence AI startup valued at over one billion dollars in 2026. At Governor Newsom’s request, she co-authored the California Frontier AI Policy Report in 2024, a document whose recommendations are currently under active consideration for California legislation. She wrote the governance framework for an industry in which she simultaneously holds equity in a billion-dollar company. In any other regulated profession that sentence ends a career. In AI it produces a Time Person of the Year designation. She received that designation in 2025. The Queen Elizabeth Prize for Engineering followed.

Her Credentialer status — the ability to transfer institutional legitimacy to any governance process she participates in — is among the highest of anyone on this exhibit. She is the most complete illustration of why Credentialer belongs in the taxonomy. Institutional reputation increasingly substitutes for the independent verification mechanisms that do not yet fully exist.

Ilya Sutskever

Ilya Sutskever is the only person on this exhibit who used the available governance mechanism, watched it fail in real time, and left to build something different. He co-founded OpenAI in 2015 and served as Chief Scientist, contributing foundational research to GPT-2, GPT-3, ChatGPT, and the o1 reasoning models. In November 2023 he authored a fifty-two-page memo accusing Sam Altman of lying, manipulating executives, and fostering internal division. He cast a deciding vote to remove Altman as CEO. Altman was reinstated within five days. The board was reconstituted. Every member who voted against Altman eventually left OpenAI. Sutskever departed in May 2024 and founded Safe Superintelligence, a company whose entire stated architecture is designed to resist the commercial pressure that produces the interlocking network this exhibit documents. SSI has raised three billion dollars from Andreessen Horowitz, Sequoia, Alphabet, and NVIDIA, all investors with documented financial interests in the broader AI ecosystem whose commercial pressure SSI is meant to resist. He is the auditor who left to build an independent audit firm, funded by the clients of the firms he left. Whether the structure holds under that capital pressure is the live forensic question. His presence on this exhibit is not primarily about his current role configuration. It is about what his November 2023 board vote proved. The governance mechanism existed on paper. It did not survive contact with commercial reality.

Reid Hoffman

Reid Hoffman is the most legally documented independence violation on the exhibit. He co-founded LinkedIn, the professional network whose data is foundational training material for AI systems globally. He co-founded Inflection AI with Mustafa Suleyman in 2022. In 2024 Microsoft licensed Inflection’s technology and hired most of its team in a deal widely characterized as structured in part to avoid traditional merger scrutiny. That deal enriched Hoffman personally and was approved by the Microsoft board on which he sits. He is a partner at Greylock Partners, which holds stakes in both OpenAI and Anthropic. He sits on Microsoft’s board with explicit committee responsibilities for responsible AI oversight, while Greylock holds a stake in Anthropic, a company in which Microsoft subsequently invested five billion dollars. In March 2026 the National Legal and Policy Center filed a formal complaint with the Securities and Exchange Commission documenting his undisclosed financial interest in a competing AI company relative to his Microsoft board responsibilities. That is not a journalistic allegation. It is a regulatory record. He resigned from OpenAI’s board in 2023 citing conflicts of interest with his AI investments. He retained his Microsoft board seat with larger conflicts intact. He resolved the smaller conflict. He kept the larger one.

Yoshua Bengio

Yoshua Bengio is the most academically credentialed entry on this exhibit and the most forensically complex. He is a full professor at Université de Montréal, founder of Mila — the world’s largest academic deep learning research institute — and the most cited living scientist across all fields on earth. He is not a billionaire. He has deliberately avoided the commercial AI investment activity that would make him one. That deliberate avoidance is itself the most important fact in his record — he is the only individual on this exhibit who made a documented institutional choice to resist the configuration the exhibit describes. He chairs the International AI Safety Report — mandated by thirty nations and representing the closest thing to independent external AI oversight that currently exists anywhere on earth. He was elected co-president of the United Nations’ first independent international scientific panel on AI in March 2026. His financial independence is real and documented. It is not absolute. He co-founded Element AI in 2016 — a commercial AI company that raised over two hundred million dollars and was acquired by ServiceNow in 2020. He serves as scientific advisor to Recursion Pharmaceuticals and Valence Discovery — both AI-driven companies that pay advisory fees. Mila has incubated over fifty commercial AI startups. LawZero — the nonprofit safety organization he founded — is funded in part by Schmidt Sciences, connected to former Google CEO Eric Schmidt. He has said publicly that he does not know if the alignment problem can be solved before transformative AI arrives. The most credentialed safety voice on the planet — the chair of the international report backed by thirty nations — stated publicly that he does not know if safety is achievable in time. That is not pessimism. That is a finding. The network continues building regardless.

Andrew Ng

Andrew Ng co-founded Google Brain in 2011 and served as Chief Scientist of Baidu from 2014 to 2017. He founded DeepLearning.AI — an education platform that has trained over eight million AI practitioners globally on curriculum he designed, on a platform he chairs, with certificates his companies issue. He is the Managing General Partner of AI Fund — a venture studio that builds and funds AI companies. He joined Amazon’s board in April 2024. Amazon has invested four billion dollars in Anthropic. He opposed California SB 1047 — the primary state-level AI safety legislation of 2024 — leading a public campaign that contributed to Governor Newsom’s veto, while holding financial stakes in AI companies that would have been regulated by it. He holds a Stanford adjunct professorship whose affiliation reinforces the blending of academic legitimacy and commercial AI influence documented throughout this exhibit. The gatekeeper finding is the most important entry in his record. Eight million future AI builders learned what AI is, what it can do, and what questions are worth asking about it from Andrew Ng’s curriculum. That curriculum was designed by a person with documented financial stakes in the commercial AI ecosystem those students will enter. The questions it teaches are the questions Andrew Ng decided are worth teaching. The questions it does not teach are the questions he decided are not. That is not a classroom. That is an epistemological configuration with eight million students inside it.

Sheryl Sandberg

Sheryl Sandberg served as Meta’s Chief Operating Officer from 2008 to 2022 — running the operational infrastructure of the company that built the social graph, the advertising targeting system, and the data architecture that AI systems now depend on for training and deployment. She left Meta’s board in January 2024. In March 2026 she joined the board of Nscale — a British AI infrastructure company that builds and operates the data centers, GPU clusters, and orchestration software that frontier AI systems run on. Nscale closed a €1.7 billion Series C funding round in the same month she joined its board. Its partners include Microsoft, NVIDIA, and OpenAI — three of the most powerful nodes in the interlocking network this exhibit documents. She could have gone anywhere. She chose the infrastructure layer underneath the entire AI ecosystem. That choice is the forensic finding. In 2025 the Delaware Chancery Court imposed sanctions on her for deleting emails from her personal account related to the Cambridge Analytica privacy scandal — emails relevant to an active shareholder lawsuit. A court-imposed sanction for destroying evidence in a governance failure did not prevent her from joining the board of a company at the center of AI infrastructure governance fourteen months later. No disclosure requirement addressed it. No governance standard prevented it. The configuration simply reformed around a new institution. She did not join the AI race. She bought the road the race runs on.

THE PIPELINE: WHO TRAINS THE NEXT GENERATION

The configuration documented in this exhibit does not sustain itself by accident. It reproduces. The same network that builds, funds, regulates, and certifies AI today is also training the engineers, researchers, and practitioners who will occupy those roles tomorrow. Andrew Ng’s DeepLearning.AI curriculum has reached over eight million students globally — on a platform he chairs, with certificates his companies issue, teaching questions he decided are worth asking. Fei-Fei Li’s Stanford HAI Global Network exports governance curricula and ethical frameworks to over twenty universities across five continents. Yoshua Bengio’s Mila has produced hundreds of PhD graduates who now hold senior positions at OpenAI, Anthropic, Google DeepMind, and Meta. The academic pipeline is not neutral infrastructure. It is the reproduction mechanism of the network. The values embedded in that curriculum — what AI is for, what questions are legitimate, what oversight looks like, what independence means — are being installed in the next generation of builders before they write their first line of professional code. By the time those students occupy the governance roles their teachers currently hold, the configuration will feel not like a structural problem but like the natural order of things. That is how a network sustains itself across generations. Not through conspiracy. Through curriculum.

THE INSTITUTIONAL LAYER: HONORABLE MENTIONS

The ten individuals documented in this exhibit are the visible nodes of a larger network. Behind them sits an institutional layer whose conflicts are structural rather than personal — embedded in corporate architecture rather than individual biography. Three corporations belong in any complete account of AI governance capture.

Alphabet — Google’s parent company — simultaneously builds frontier AI systems through Google DeepMind, funds competing AI companies through Google Ventures, participates in standards bodies, and funds academic AI research through university partnerships while deploying the systems those standards govern. It invested in Anthropic while competing with Anthropic. It employs Demis Hassabis while he advises governments on AI policy. The conflict is not in one person. It is in the balance sheet.

Microsoft holds a documented ownership stake in OpenAI while building competing internal AI systems through Microsoft AI under Mustafa Suleyman. It funds Nscale — Sheryl Sandberg’s infrastructure company — while its board member Reid Hoffman holds Greylock stakes in Anthropic, which Microsoft also invested five billion dollars in. Its government contracting relationships give it regulatory access that no independent oversight body possesses. The conflict runs through every line of its corporate structure simultaneously.

Amazon has invested four billion dollars in Anthropic. It provides the cloud infrastructure that Anthropic’s systems run on through AWS. It competes with Anthropic through its own AI development. Andrew Ng sits on its board. It is simultaneously the landlord, the investor, the competitor, and the governance participant of the same AI ecosystem. No independent standard requires it to disclose how those relationships affect its AI policy positions.

These three corporations do not appear in the exhibit because the exhibit documents individuals. They belong in this account because the individuals in the exhibit do not act in isolation. They act within institutional structures whose conflicts compound their own. The personal independence violations documented above are the branches. The corporate architecture is the root system underneath them.

THE NETWORK WITHIN THE NETWORK

The ten individuals documented above are not strangers who arrived at the same governance table independently. Reid Hoffman brokered the Microsoft-OpenAI partnership that gave Sam Altman the computing infrastructure to scale GPT-4. Demis Hassabis and Mustafa Suleyman co-founded DeepMind together before Suleyman became CEO of Microsoft AI — the company whose board Hoffman sits on. Fei-Fei Li and Andrew Ng share the Stanford AI Lab as their common institutional home. Yoshua Bengio’s foundational research at Mila trained the generation of researchers who now staff the companies Ng’s AI Fund invests in. Ilya Sutskever’s Safe Superintelligence is funded by Sequoia and Andreessen Horowitz — the same investors who hold stakes in Anthropic, where Dario Amodei sets the safety standards the industry follows. Sheryl Sandberg’s Nscale is partnered with Microsoft, NVIDIA, and OpenAI — three entities whose leadership appears elsewhere in this exhibit. The role overlaps documented in each column are the vertical dimension of the conflict. The relationships between the columns are the horizontal dimension. Together they produce not ten individual independence violations but a single interlocking structure in which every node reinforces every other node. That is what independent governance was designed to prevent. That is what does not exist here.

THE POLITICAL CLASS IS ASLEEP

The political class is not unaware. They are outpaced, outspent, and outmaneuvered. When Governor Newsom needed expertise to write California’s frontier AI policy framework, he called the people who build frontier AI. When Congress needed to understand what it was regulating, it relied heavily on testimony from the same institutions that were commercially invested in its development. When the White House needed AI safety commitments, it relied primarily on voluntary commitments from the companies deploying the systems. In each case the pattern is identical — the institution that should provide independent oversight does not have the technical staff, the institutional knowledge, or the financial independence to do so. So it outsources the expertise to the only people who have it. The people in the exhibit above. The result is not corruption in the criminal sense. It is something more durable and more dangerous — a governance structure that looks legitimate because the most credentialed people in the room are vouching for it. By the time the public understands what was decided and by whom, the foundation will have been poured. You cannot redesign a building after the concrete sets.

THE WINDOW IS CLOSING

There is one more variable the exhibit cannot show but the record makes clear. The ten individuals documented above understand precisely how fast this is moving. They have said so publicly, repeatedly, and in detail. They know that governance structures take years to build. They know that technical standards require sustained institutional development. They know that independent oversight bodies need funding, mandate, and expertise that does not yet exist. And they know that every month without an independent framework is a month in which the architecture of AI governance — its values, its boundaries, its accountability structures — is being set by the people in this exhibit. The window for independent intervention is not closing. It is being closed. Deliberately or not, the effect is the same. Speed is not neutral in a governance vacuum. It is an advantage — and the ten people above have it.

THE CLOSING

The people who build the well cannot certify the water is safe to drink. Not because they are evil. Because they cannot be objective about something they built, profit from, and will pass to their children. Every civilization that learned this lesson learned it the hard way — after the water made people sick. Banking after 1929. Accounting after Enron. Food safety after children died. Drug approval after thalidomide. The pattern is always the same. The industry governs itself until the harm is undeniable. Then the public demands independence. Then independent oversight is built — imperfectly, slowly, with industry resistance at every step. AI is at the beginning of that cycle. The well is being dug. The water is flowing. The ten are certifying it is safe. The question the old woman asked at the edge of the village has not been answered.

Who is watching the watchers?

Stay Sovereign.

Jim Germer

May 6, 2026

About Jim Germer

Jim Germer is a CPA with forty years of forensic accounting experience and the founder of The Human Choice Company. He writes about cognitive sovereignty, human agency, and the future of thinking at digitalhumanism.ai. Learn more about Jim at digitalhumanism.ai/about.
